Does Exploratory Testing Have a Place on Agile Teams? Exploratory testing—questioning and learning about the product as you design and execute tests rather than slavishly following predefined scripts—makes sense for many projects. But does it make sense for agile projects? In this column, Johanna Rothman examines how exploratory testing might work on an agile project.

Secrets to Automated Acceptance Tests Has your team been on the search for fully automated acceptance tests? Before you set out on that adventure, check out some of the accomplishments and perils behind the quest for complete automation, as explained by Jeff Patton in this week's column. Fully automated acceptance tests may seem like the solution to many problems, but you should know that they come with a few problems of their own.

Surveying the Terrain Bug logs and testing dashboards are great reports for testers, but sometimes these reports simply fall short of communicating key information to stakeholders, such as why testing is blocked. In this column, Fiona Charles explains that when sharing information with stakeholders, it's best to use their language and create a report that maps out the system's current status. Fiona's solution: survey reports.

Training Test Automation Scripts for Dynamic Combat: Strikes Dion Johnson uses a martial arts metaphor to describe four common issues with automated tests and how test automation specialists can "train" their scripts to identify, capture, and handle these problems. In this week's column, Dion talks about how to develop test automation scripts that handle dynamic paths within an application, which he calls "strikes."

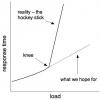

Peeling the Performance Onion Performance tuning is often a frustrating process, especially when you remove one bottleneck after another with little performance improvement. Danny Faught and Rex Black describe the reasons why this happens and how to avoid getting into that situation. They also discuss why you can't work on performance without also dealing with reliability and robustness.

Testing COTS: When, How, and How Much? Testing processes and practices are well defined and generally understood for internally developed applications, but what about those that are licensed from third parties? Granted, the vendor has responsibility for testing its own products, but the possibility of the software failing still exists and can be costly, even devastating; blaming others offers little consolation. If you rely on a commercial off-the-shelf (COTS) application, where does your trust in the vendor end?

An Uncomfortable Truth about Agile Testing One characteristic of agile development is continuous involvement from testers throughout the process. Testers have a hard and busy job. Jeff has finally started to understand why testing in agile development is fundamentally different.

Pack Up Your Troubles Who likes working on troubled projects? Fiona Charles does. In this column, find out why Fiona sometimes seeks out such projects and how she maintains the right frame of mind to allow her to solve problems creatively and devise tactical solutions to project issues. More importantly, you, too, can learn how to enjoy troubled projects and develop your project skills.

Training Test Automation Scripts for Dynamic Combat: Self Defense Dion Johnson uses a martial arts metaphor to describe four common issues with automated tests and how test automation specialists can "train" their scripts to identify, capture, and handle these problems. In this week's column, Dion talks about how to develop test automation scripts that defend themselves from problems and failures.

Making Sense of Root Cause Analysis Applying Root Cause Analysis (RCA) to software problems is fundamentally different from applying it to other engineering disciplines. Rather than analyzing a single major failure, we are usually analyzing a large number of failures with software. In this column, Ed Weller explains how to use RCA to your advantage.
