Mobile usability goes a long way in enhancing end-user app acceptance. But usability starts with the user, and users differ in terms of knowledge, interests, goals, and so on. This article discusses some core usability characteristics that matter to customers, and how test engineers can understand and achieve them.

Smartphone applications have been booming since the advent of the iPhone. In October 2015, there were nearly two million apps available for download in the iTunes App Store. If we combine this with Android and Windows Phone submissions, the numbers are even more mind-boggling.

While a few apps go viral and get downloaded thousands of times, many fail to attract even a handful of users. Apps can flop because the content is not interesting, but there’s another prevalent (and avoidable) reason for failure: they are too difficult to use on a mobile device.

Usability starts with the user, and users differ in terms of knowledge, interests, goals, and so on. As test engineers, we must ask questions about the app users, their mobile tasks, the environments they work in, the types of devices they use, and how tech-savvy they are.

Compuware conducted a survey in October 2012 about how customers react to poor mobile app experiences. Of the respondents, 48 percent reported they would be less likely to use the mobile app, 34 percent said they would switch to a competitor’s app, 31 percent would tell others about their poor experience, and another 31 percent said they would be less likely to purchase from the app company.

Clearly, usability is an important attribute to account for. This article discusses some core usability characteristics that matter to customers, and how test engineers can understand and achieve them.

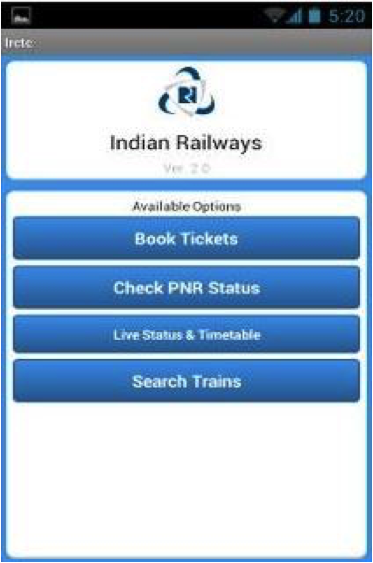

Overall app simplicity: The Indian railways mobile app IRCTC is widely used, and one thing to applaud is how simple its user interface is. All its elements are presented clearly for users of varied skill levels. Its main page, pictured below, is straightforward and easy to navigate.

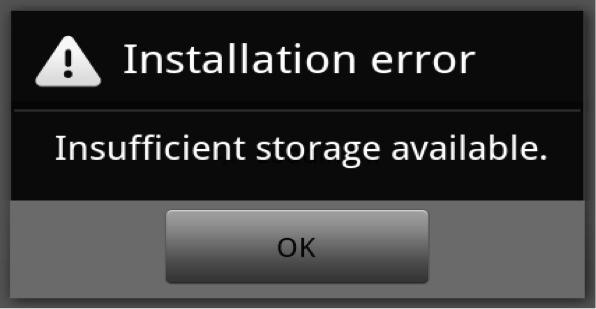

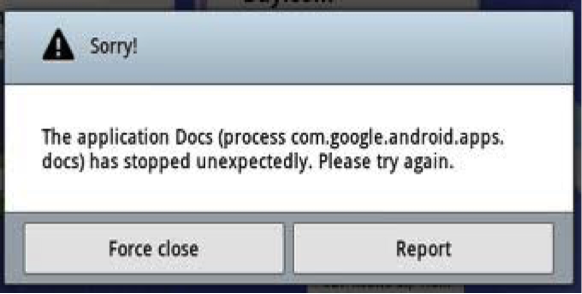

Helpful error scenarios: Errors should be, first, easy to understand and, second, easy to recover from. In the first error dialog box below, the user should be able to understand that there is not enough storage for the application to install. But in the second error message, the issue isn’t clear, and the user would have a more difficult time troubleshooting.

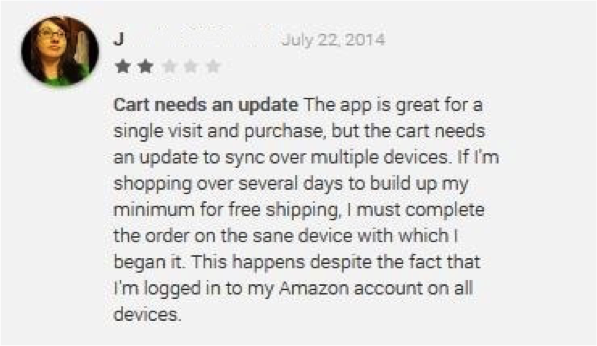

Workflow efficiency and synchronization: A user uses an app to get a certain job done. In the example below, the Amazon app user synced her app on multiple devices. However, when she built her cart on one device and accessed it on another, the cart was empty. So, while it was good for a single visit, it failed on visits across devices. This disruption would frustrate anyone.

End-to-end user satisfaction: My company recently launched an iOS application that teaches kids basic mathematical concepts through interactive games. As you can see on the screen below, the app is simple, and students learn small pieces of information on every page. As part of our user testing, we actually brought in kids to test the app in order to gauge overall user satisfaction.

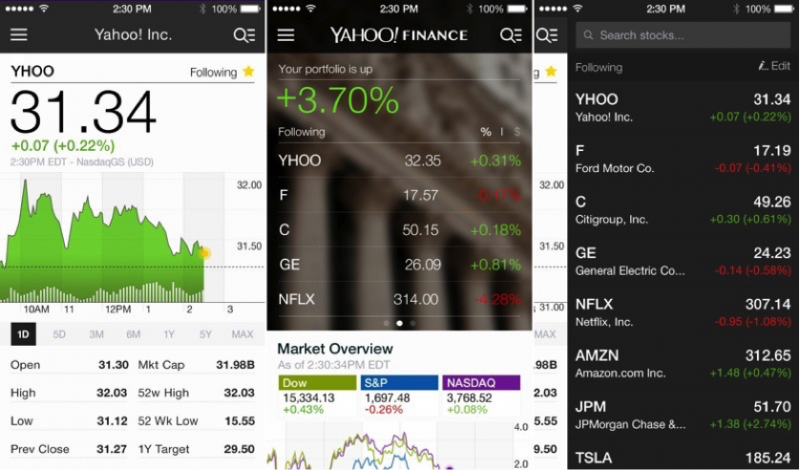

On the other hand, consider the Yahoo! Finance app. The creators knew the app would be used by busy professionals who want all possible details on a single page, even if that means a cluttered screen.

The type of users and how they will interact with your product have to be taken into consideration for each app.

As test engineers, we must understand that mobile applications differ from traditional desktop and web-based apps in a number of ways. Correspondingly, the test focus areas also need to differ. Many areas require special attention, but let’s restrict our discussion to just five elements: functionality, context, range of devices, data entry methods, and multimodality.

Let’s use the Skype mobile app as the first example. Typically, a mobile device would have two speakers: one on the front of the device for normal calls, and one in the back for a loudspeaker. The Skype app was switching normal calls to loudspeaker mode the moment a user moved the device from his palm to his ear. This was not only uncomfortable, but also embarrassing in public. This is an example of functionality leading to a bad user experience.

Context can be defined as the totality of a consumer’s mobile experience. This includes the situational, attitudinal, and preferential habits of the user. Mobile context is constrained by factors such as screen sizes, users’ fingers, location, posture, and network connectivity. Mobile context also includes various capabilities like automatic orientation detection, dynamic location detection, the compass, and accelerometers. Take location as an example of mobile context. Say you ask your application to display a list of Chinese restaurants. A good application will determine your geographical position and give you a list of nearby places, along with reviews from other users in the same area and specific eating options depending on the time of day. Results should be targeted, contextual, and valuable.
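The location example can be sketched in a few lines of Python. This is only an illustration of the idea, not how any particular app works: the restaurant names, coordinates, and the five-kilometer radius are made up, and the distance is the standard haversine great-circle formula.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, a common approximation


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometers between two latitude/longitude points."""
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


def nearby(places, user_lat, user_lon, radius_km=5.0):
    """Return names of places within radius_km of the user, nearest first."""
    scored = [
        (haversine_km(user_lat, user_lon, p["lat"], p["lon"]), p["name"])
        for p in places
    ]
    return [name for dist, name in sorted(scored) if dist <= radius_km]


# Hypothetical data: three restaurants and one user position.
PLACES = [
    {"name": "Far Noodle House", "lat": 12.30, "lon": 77.60},
    {"name": "Corner Dim Sum", "lat": 12.972, "lon": 77.595},
    {"name": "Wok Express", "lat": 12.990, "lon": 77.600},
]
results = nearby(PLACES, 12.9716, 77.5946)
```

A tester can use the same calculation as an oracle: feed the app a simulated position and check that the results it shows are the ones inside the expected radius, in the expected order.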

Another focus area is the range of device models the application supports. An application may work fine on one device but crash or fail on another. For example, Zomato is a restaurant guide app with great features and UI. However, the app crashes on Android 4.3. Testers have a prime responsibility to verify these nuances, keeping an optimized test matrix in mind.
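One common way to keep a device matrix small is pairwise coverage: instead of testing every combination, choose configurations so that every pair of values appears together at least once. The sketch below is a simple greedy version of that idea, assuming three illustrative factors (OS version, screen size, network); the factor names and values are placeholders, not a recommended device list.

```python
from itertools import combinations, product

# Hypothetical factors; replace with the devices and conditions your users actually have.
FACTORS = {
    "os": ["Android 4.3", "Android 5.1", "iOS 9"],
    "screen": ["small", "medium", "large"],
    "network": ["wifi", "3g"],
}


def pairs_of(config):
    """Return every pair of (factor, value) items that one configuration covers."""
    return set(combinations(sorted(config.items()), 2))


def pairwise_matrix(factors):
    """Greedily pick configurations until every value pair is covered at least once."""
    names = sorted(factors)
    all_configs = [
        dict(zip(names, values))
        for values in product(*(factors[n] for n in names))
    ]
    uncovered = set().union(*(pairs_of(c) for c in all_configs))
    chosen = []
    while uncovered:
        # Take the configuration that covers the most still-uncovered pairs.
        best = max(all_configs, key=lambda c: len(pairs_of(c) & uncovered))
        chosen.append(best)
        uncovered -= pairs_of(best)
    return chosen


matrix = pairwise_matrix(FACTORS)
for config in matrix:
    print(config)
```

With these factors the full matrix has 18 combinations, while a pairwise selection needs far fewer. Greedy selection does not guarantee the smallest possible set, but it is easy to reason about and to extend with extra factors such as manufacturer or locale.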

Next up are data entry methods. Mobile devices, especially smart devices, support many data entry methods, including a stylus, touch, voice recognition, gestures, and so on. Test engineers must ensure that the application responds well to each potential entry method.

Multimodality combines voice and touch as input and displays visual screens and spoken responses as output—for example, a navigation application that provides spoken responses as audio aids for the driver and visual displays as directions. Testers need to examine input and output to be sure both audio and visual components function properly.

Taking into account the many variables that need to be considered from the mobile usability angle, the following practices come in handy when creating a testing strategy.

Start early. Testing should begin at the requirements analysis phase. Most usability issues are related to design, and if you can catch them up front when you review the designs as wireframes or mockups, it will mean less trouble down the road.

Work iteratively. Testing regularly makes it a lot easier to support small incremental changes throughout the lifespan of the application. Usability should be considered in all the stages throughout the development lifecycle, because every change in design and every interaction with the product prototype has an impact.

Use usability in conjunction with other testing types. You need to think about combinatorial elements in usability testing. For example, functionality and usability can cause a conflict; a functional designer might want to make the product feature-rich, whereas the usability engineer’s focus is to keep the application simple and intuitive. Usability studies need to be performed collaboratively, keeping in mind the performance, security, localization, functionality, and accessibility aspects of the application.

Check in after launch. Usability studies should continue after the product launches. Checking the app store, studying the ratings and reviews by users, and analyzing competition are all valuable practices in enhancing the usability of the application.

Use productivity tools. Usability testing for mobile apps is still largely done manually, and there is room to use productivity tools to make the effort more effective. There are many handy productivity tools available for a variety of usability factors, including testing the application across multiple devices for optimum screen resolution. At the end you get a report with screenshots for each selected device, indicating the screen resolution effectiveness. Another tool creates heat maps to help find areas where users’ focus lingers the most. These visible indicators help realign the UI, if needed. And recording software shows where participants tapped and scrolled and how they navigated from page to page, helping gauge the level of workflow intuitiveness.
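To make the heat map idea concrete, here is a minimal sketch of the aggregation step behind such a report: recorded tap coordinates are counted per grid cell, and the busiest cells show where attention concentrates. The tap log, screen size, and grid dimensions are invented for illustration; real tools collect and render this data in their own ways.

```python
from collections import Counter


def tap_heatmap(taps, screen_w, screen_h, cols=4, rows=8):
    """Count taps per (row, col) grid cell; taps are (x, y) pixel coordinates."""
    counts = Counter()
    for x, y in taps:
        if not (0 <= x < screen_w and 0 <= y < screen_h):
            continue  # ignore taps recorded outside the screen bounds
        col = int(x * cols / screen_w)
        row = int(y * rows / screen_h)
        counts[(row, col)] += 1
    return counts


# Hypothetical tap log from one recorded session on a 1080x1920 screen.
taps = [(100, 150), (120, 170), (540, 960), (1000, 1900), (90, 200)]
heat = tap_heatmap(taps, 1080, 1920)
hottest_cell, hits = heat.most_common(1)[0]
print(hottest_cell, hits)
```

If the hottest cells do not line up with the primary controls on the screen, that is a hint the layout may need to be rearranged.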

Go to the source to discover user expectations. Reading about usability is one thing, but interacting with people who have special accessibility needs is invaluable. It opens your eyes to their requirements and reveals some big coverage gaps you may have.

Talk like a human, not a programmer. Emotional intelligence factors are gaining attention. When you talk about end-users, don’t talk about them as entities, but as real people with emotions. If you want to account for an emotional angle in your testing strategy and you don’t know where to start, usability is a good place to consider.

Testing, testing, testing. While it is good to have a well-defined strategy, it’s even better to also set aside time for exploratory testing. Most often, usability issues surface when you try operating your product in an exploratory way. Invite groups to come test your app and think of creative ways to maximize test coverage.

In essence, mobile usability goes a long way in enhancing end-user app acceptance. This industry is still evolving by the day, but the practices discussed above will give you a head start in building usable mobile applications.

User Comments

Nice points. Thanks for sharing.

Good start. I know space is limited, but I like to use usability checklists, shoot for ubiquitous configuration ability, and keep the UI/GUI “fun”.

Good article @ Mukesh Sharma

#UsabilityTesting is still an important part of #software #testing.

http://bit.ly/1MQ5AQK

Good article @Mukesh.. Yes, customer-centric mobile usability testing measures the user’s ease of handling and experiencing the product/portal.. Thanks for sharing!

Good Article @Mukesh

A mobile app’s user interface must be simple for the app to become popular. Top app development companies always prioritize this during app development.

Nice article related to testing usability of mobile apps. As we see the drastic growth in the daily development of different mobile apps, which can be related to Android or iOS, mobile app testing through automation is in great demand. Mobile test automation provides various test solutions, which help you to verify and validate the overall functioning of the mobile apps. Some of the most preferred tools used for testing usability of mobile apps are Appium, TestingWhiz, Robotium, etc.

Excellent, useful context.

Very nice article.

Loved the way you have mentioned.

Nothing is more frustrating to mobile users than not having an app work on their specific model of phone. One of the main issues that users have when using mobile apps, particularly m-commerce ones, is poor navigation. This means that while they are using the app, they have difficulty finding what they are looking for, and have to navigate too long to find it.

A mobile app’s success hinges on just one main thing: how users perceive it. Usability contributes directly to how a user feels about your app, whether negative or positive, as they consider the ease of use and the perceived value, utility, and efficiency of the overall experience.

Regards

Mukesh Sharma, I really appreciate this informative article. As we all know, technology grows day by day, and the demand for mobile application development increases proportionally. To get better outcomes from a mobile application, good QA is important. Mobile test automation provides various test solutions, which help you analyze and track the real-time status of mobile applications. Some highly preferred tools used for testing the usability of mobile apps are Userlytics, Applause, Swrve, etc.
