5 Ways to Optimize Tests for Continuous Integration

“Test early, test often.” If you’ve worked with me, you are probably sick of hearing me say that. But it’s common sense that if your tests find a problem shortly after that problem was created, it will be easier to fix. This is one of the principles that make continuous integration a very powerful strategy.

I’ve often seen teams that have many automated tests but don’t run them as part of a continuous integration program. The team usually has reasons for thinking it can’t: maybe the tests take too long to execute, or they aren’t reliable enough to give accurate results on their own, so a person has to interpret the results.

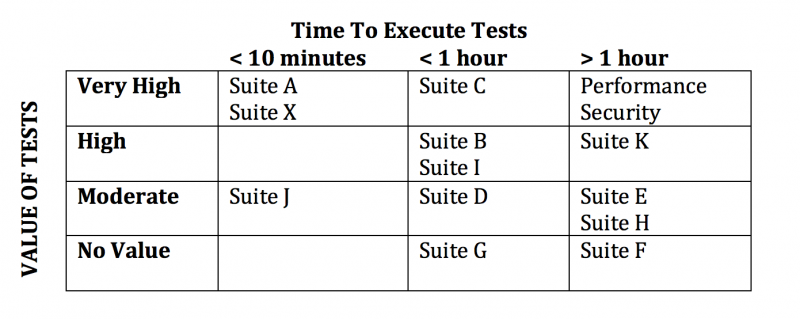

When assessing these suites, I start with a simple exercise. First, I draw two axes on a white board. The vertical axis shows the value of the tests and the horizontal axis shows the time it takes the suite to execute.

The team and I then write the name of each test suite on a sticky note and place each note at the appropriate spot on the board. The chart shows an example grid with each test suite placed by value and execution time.

When we talk about the value of the tests, it’s based on the team’s subjective opinion, so we keep the choices pretty simple: No Value, Moderate Value, High Value, and Very High Value. We base this opinion on two things: the reliability of the tests, meaning their ability to give accurate results each time they are executed, and the level of confidence the tests give the team in the quality of the system.

For example, some test suites are a must-have when it comes to making a decision, but the results are a little flaky. When tests fail for no apparent reason, a person has to re-execute them manually. We might still call this test suite High Value, but if it ran accurately every time, we would call it Very High Value.

On the other side of the coin, sometimes there is a test suite that gets executed because it’s part of a checklist, but no one really knows what the results mean. Maybe the original author has left the team and no one picked up the ownership of that suite. We would put that suite in the No Value category.

The horizontal axis is easier to determine: It’s simply the number of minutes that it takes to execute that suite.

Now that you have an assessment of each suite, it’s time to think about improving your suites by making them more valuable (moving up in the diagram) or by helping them execute faster (moving to the left).

For continuous integration, I like to sort the tests into four categories:

- Tests with Very High Value that execute in ten minutes or less

These tests can run with every build. These are used to accept the build for further testing; the team should consider the build failed until these tests pass. Your developers will not want to wait more than ten minutes to get the build results.

- Tests with High Value or better that execute in an hour or less

These tests can run continuously. For example, you might configure these tests to execute every hour and start again as soon as they are complete. If there isn’t a new build ready yet, you can wait until the next build completes.

- Tests with High Value or better that take longer than an hour to execute

These tests can run daily—or, usually, nightly, so that the results are available when the working day begins for your team.

- Tests with Moderate Value

These tests can run once per week or once per release cycle.

Notice that I did not include tests with No Value. These should be improved to add value, or just dropped from your execution. It doesn’t make sense to keep test suites that don’t add value.
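The four categories can be written down as a small decision function. This is only a sketch of the framework above: the `Value` enum, the `schedule_for` function, and the suite names are hypothetical, and the ten-minute and one-hour thresholds are the ones I use, which you may want to change.

```python
from enum import IntEnum


class Value(IntEnum):
    """The team's subjective rating of a suite (hypothetical names)."""
    NONE = 0
    MODERATE = 1
    HIGH = 2
    VERY_HIGH = 3


def schedule_for(value: Value, minutes: float) -> str:
    """Map a suite's assessed value and run time to a CI cadence."""
    if value == Value.NONE:
        return "improve or drop"
    if value == Value.MODERATE:
        return "weekly or per release"
    if value == Value.VERY_HIGH and minutes <= 10:
        return "every build"
    if minutes <= 60:
        return "continuously"
    return "nightly"


# Example assessment from the whiteboard exercise (made-up suites).
suites = {
    "build acceptance": (Value.VERY_HIGH, 6),
    "feature regression": (Value.HIGH, 45),
    "full performance": (Value.VERY_HIGH, 180),
    "legacy checklist": (Value.NONE, 20),
}
for name, (value, minutes) in suites.items():
    print(f"{name}: {schedule_for(value, minutes)}")
```

Writing the rules down this way makes it easy to argue about the thresholds with the team instead of about individual suites.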

I chose the time boundaries of ten minutes and one hour based on input from the development teams. They want to get quick feedback. You can imagine a developer waiting for the build to complete successfully before going to lunch. Your timelines may vary based on your realities; this is just a framework to show the thought process behind selecting the tests that run with the build versus hourly.

A huge benefit of executing the tests this frequently is that you are likely to have very few code changes between a successful test run and a failed test run, making it easier to isolate the change that caused the test to fail.

There are several strategies that have been useful for optimizing existing tests for the continuous integration suites. Here are five proven practices.

1. Create tiny but valuable test suites

Choose the most important tests and pull them into a smaller suite that runs faster. These are usually very coarse-grained tests, but they’re necessary to qualify your system or app for further testing. If these tests don’t pass, it doesn’t make sense to proceed.

A good starting point would be to create a new entity and perform the most important operation on that entity. For example, if it’s a note-taking app, the test would be to create a note, add text, close the app, then reopen and verify that the text was saved. If your note-taking app can’t save a note, there isn’t much use in proceeding to other tests.

We often call these build acceptance tests or build verification tests. If you already have these suites, great; just make sure they execute quickly.
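Here is roughly what the note-taking check could look like as a build acceptance test. The `NoteApp` class is a hypothetical stand-in that saves notes to a JSON file; in a real suite you would drive your actual app instead.

```python
import json
import tempfile
from pathlib import Path


class NoteApp:
    """Hypothetical stand-in for the app under test; persists notes to a JSON file."""

    def __init__(self, storage: Path) -> None:
        self.storage = storage
        # Load previously saved notes if the storage file exists.
        self.notes = json.loads(storage.read_text()) if storage.exists() else {}

    def create_note(self, title: str) -> None:
        self.notes[title] = ""

    def add_text(self, title: str, text: str) -> None:
        self.notes[title] += text

    def close(self) -> None:
        # Closing the app is what writes the notes to disk in this sketch.
        self.storage.write_text(json.dumps(self.notes))


def test_note_survives_restart() -> None:
    with tempfile.TemporaryDirectory() as tmp:
        storage = Path(tmp) / "notes.json"

        # Create a note, add text, and close the app.
        app = NoteApp(storage)
        app.create_note("groceries")
        app.add_text("groceries", "milk, eggs")
        app.close()

        # Reopen and verify that the text was saved.
        reopened = NoteApp(storage)
        assert reopened.notes["groceries"] == "milk, eggs"
```

One test like this per core entity is usually enough to decide whether a build deserves further testing.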

2. Refactor the test setup

Tests generally have a setup, then perform verification. For the note-taking example, to verify that the app opens with the previous text present, you first have to set up the test with the text. Examine how your tests are doing the test setup and see if there is a better way.

For example, one team had a suite of UI-driven tests that took a long time to execute and had many false failures due to timing issues and minor UI tweaks. We refactored that suite to perform the test setup via API commands and do the verification through the UI. This updated suite had the same functional coverage, but it executed 70 percent faster and had about half the false failures caused by UI changes.
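The shape of that refactoring looks something like the sketch below. Everything here is hypothetical: `Backend` is an in-memory stand-in for the system under test, and `ApiClient` and `UiDriver` stand in for whatever API client and UI automation tool your team uses. The point is only where each one appears in the test.

```python
class Backend:
    """Hypothetical in-memory stand-in for the system under test."""

    def __init__(self) -> None:
        self.notes: dict[str, str] = {}


class ApiClient:
    """Hypothetical API client: no rendering, so setup is fast and stable."""

    def __init__(self, backend: Backend) -> None:
        self.backend = backend

    def create_note(self, title: str, text: str) -> None:
        self.backend.notes[title] = text


class UiDriver:
    """Hypothetical UI driver: slow in a real app, so reserve it for the check."""

    def __init__(self, backend: Backend) -> None:
        self.backend = backend

    def open_note(self, title: str) -> str:
        # A real driver would launch the app and read the text off the screen.
        return self.backend.notes[title]


def test_note_text_is_displayed() -> None:
    backend = Backend()

    # Setup through the API instead of clicking through the UI.
    ApiClient(backend).create_note("groceries", "milk, eggs")

    # Verification through the UI, which is the part we actually want to test.
    displayed = UiDriver(backend).open_note("groceries")
    assert displayed == "milk, eggs"
```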

3. Be smart with your wait times

We have all done it: A flaky test keeps failing because the back end didn’t respond in time or some resource is still loading, so we put a sleep statement in. We intended that to be a temporary workaround, but that was a year ago now.

Look for those dreaded sleep statements and see if you can replace them with a smarter wait statement that completes when the event happens, instead of a set period of time.
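If your test tool doesn’t already offer a smart wait, a small polling helper is often enough. This is a minimal sketch: the name `wait_until` and its default timeout and polling interval are my own assumptions, not part of any particular framework.

```python
import time
from typing import Callable


def wait_until(
    condition: Callable[[], bool],
    timeout: float = 10.0,
    interval: float = 0.1,
) -> None:
    """Return as soon as condition() is true; raise TimeoutError after `timeout` seconds."""
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(interval)


# Instead of time.sleep(30), wait only as long as the event actually takes.
# Here the "event" is simulated: it becomes true 0.2 seconds from now.
ready_at = time.monotonic() + 0.2
wait_until(lambda: time.monotonic() >= ready_at, timeout=5.0)
```

The test now finishes shortly after the event happens, and a genuine hang produces a clear timeout error instead of a confusing failure further down.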

4. Trigger tests automatically

You may have several test suites that are normally initiated by a person during the test phase of a project. Often, it only takes a little shell scripting to be able to include these tests in the continuous integration suite.

Security and performance tests are two examples of tests that a specialist outside the standard test team might perform, so they might not be configured for automatic execution. Running these tests frequently has another benefit: the problems they find are often difficult to fix, so the sooner a problem is identified, the more time the team has to fix it. These tests are often classified as Very High Value, but they take more than an hour to execute, so they are typically executed daily.
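The wrapper a CI job needs is usually small. The sketch below uses Python rather than shell, and it assumes each suite can be started from a command line and signals failure with a nonzero exit code. The `run_suites` function and the suite names are hypothetical, and the commands are placeholders for your real ones.

```python
import subprocess
import sys


def run_suites(commands: dict[str, list[str]]) -> dict[str, bool]:
    """Run each suite's command line and record whether it exited with status 0."""
    results = {}
    for name, command in commands.items():
        completed = subprocess.run(command, capture_output=True, text=True)
        results[name] = completed.returncode == 0
    return results


# Placeholder commands: each one just exits with a chosen status code.
commands = {
    "security scan": [sys.executable, "-c", "raise SystemExit(0)"],
    "performance run": [sys.executable, "-c", "raise SystemExit(1)"],
}
results = run_suites(commands)
for name, passed in results.items():
    print(f"{name}: {'passed' if passed else 'FAILED'}")
```

A scheduled CI job can call a script like this nightly and fail the job if any suite reports a failure.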

5. Run tests in parallel

Virtual machines and cloud computing services coupled with tools that help automatically set up environments and deploy your code make it much more affordable to run tests in parallel. Examine the test suites that take some time to execute and look for opportunities to run those tests in parallel.

On one team, we had a vital test suite that contained five hundred test cases. This suite took several hours to run, so we didn’t execute it very often. It was a very broad test, touching many different features. We were able to break that suite up into about a dozen suites that could run in parallel, so we could run the tests more frequently (nightly instead of weekly), and we could tell more quickly where any problems lay because the new suites were organized by feature.
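Once a big suite is split by feature, running the pieces side by side can be quite simple. This sketch uses Python’s standard `concurrent.futures` module. The `run_suite` function is a hypothetical placeholder whose short sleep stands in for launching a real suite, and the feature names are made up.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_suite(name: str) -> tuple[str, bool]:
    """Hypothetical suite runner; the sleep stands in for real test execution."""
    time.sleep(0.1)
    return name, True


def run_in_parallel(suite_names: list[str], workers: int = 4) -> dict[str, bool]:
    """Run every suite on a pool of worker threads and collect pass/fail by name."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(run_suite, suite_names))


results = run_in_parallel(["login", "search", "checkout", "billing"])
for name, passed in results.items():
    print(f"{name}: {'passed' if passed else 'FAILED'}")
```

Threads are a reasonable choice when each worker mostly waits on an external test process or a remote machine. Because the results are keyed by feature, a failure points you straight at the area to investigate.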

Improving the value of your test suites and the time it takes to execute them can help you optimize your existing test suites to fit into a continuous integration program.
