Raising the Bar with Test-Driven Development and Continuous Integration

A hallmark of truly agile teams is an unquenchable desire to continually find new and better ways of developing software. These teams believe that there really are no "best" practices, only practices which work better or worse for them. This line of thinking is even apparent in the first line of the agile manifesto stating "we are uncovering better ways of developing". Notice the progenitors of the manifesto didn't propose that they had discovered the best way, only better ways.

In this article I will explore two of the most widely accepted agile development practices, Test-Driven Development and Continuous Integration, and question how these practices can be made better with continuous testing. I will examine their strengths, shortcomings, and how the ideas behind continuous testing can provide better and faster feedback on the health of a code base. While this article will illustrate with examples from the Infinitest tool, it will also point out the other common continuous testing tools and categorize their underlying strategies as well. After reading this article you will be grounded in the ideas of continuous testing and ready to select a tool and apply this practice on your own projects.

Current State

Test-Driven Development (TDD) and Continuous Integration (CI) are popular and effective software development practices. In TDD, developers write production code only in response to failing tests. This helps teams to focus on doing just enough analysis and design to implement the current highest priority feature. One of the benefits of TDD is that production code and tests provide rapid feedback on the quality of one another. The typical Red/Green/Refactor TDD cycle lasts around 5-10 minutes, and between coding and refactoring iterations the developer usually runs, by hand, only the test that is driving the cycle. This means that although the feedback loop is very quick, unintended side effects could break other tests and the developer might not receive this feedback until the entire test suite is run.

CI is a technique whereby an automated build for a project is run after check-in, at some pre-defined interval, or a combination of both. Because all the tests for a project are typically run at some interval, the test coverage provided by this practice is complete and the whole development team will be aware of any unintended breakages. Although CI offers more complete coverage than TDD, the time it takes to get feedback is much greater, with developers receiving results at best a few minutes after a set of changes is checked in.

Both TDD and CI are based upon the principle that feedback is good, and the quicker the feedback the better. With CI feedback is typically provided to developers no sooner than the moment of check-in. With TDD, feedback is provided for the tests manually run by the developer between every TDD cycle. And as previously mentioned, the quicker feedback of TDD is provided at the expense of more complete test coverage around any given change.

So the question to ask in the spirit of continuous improvement is, "How can we improve on these practices by continuing to reduce the feedback loop while increasing the relevant coverage of our tests?"

Continuous Testing

The practice of Continuous Testing (CT) aims to reduce the feedback loop of TDD to the moment of compilation while preserving the breadth of test coverage provided by CI.

Since the goal of CT is to provide rapid feedback for every change, and since changes are likely to be happening rapidly as well, CT tools need to be smart about which tests to run, and in what order, in response to any given change. This is because running all tests for a project might be quite lengthy. Although this would provide maximal coverage, it wouldn't provide sufficiently rapid feedback to be any improvement over traditional CI. Different CT tools provide different mechanisms for handling this, as will be discussed later in this article. But for the moment, let's consider the approach taken by Infinitest, a Java CT tool.

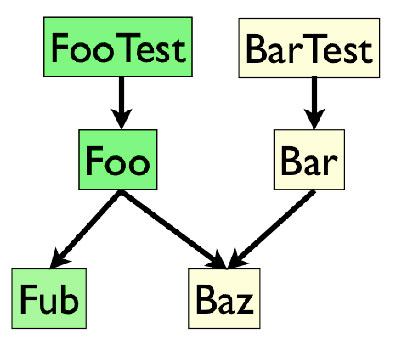

Infinitest uses a byte-code dependency analysis technique to identify the subset of tests most likely to be affected in response to any given change. For example, consider the related classes illustrated in Figure 1 below:

Figure 1. FooTest and BarTest each exercise a class under test, which in turn depends on other classes in the system under test

For any given change Infinitest will traverse all nodes affected by the change and collect all the nodes which it can identify as being test cases. These tests are re-run by the Infinitest test runner and the results are displayed within the developer's IDE. In this case, changes to the Fub class cause the FooTest to be re-run.
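The traversal described above can be sketched as a walk over a reverse dependency graph. This is a minimal illustration, not Infinitest's actual implementation: the class `AffectedTestFinder`, its methods, and the rule that a test is any class whose name ends in `Test` are assumptions made for the example, and the graph is built by hand rather than derived from byte-code.

```java
import java.util.ArrayDeque;
import java.util.Collections;
import java.util.Deque;
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

// Hypothetical helper: finds the tests that transitively depend on a changed class.
class AffectedTestFinder {
    // Reverse edges: for each class, the classes that depend on it.
    private final Map<String, Set<String>> dependents = new HashMap<>();

    // Records that class `from` depends on class `to`.
    void addDependency(String from, String to) {
        dependents.computeIfAbsent(to, k -> new HashSet<>()).add(from);
    }

    // Breadth-first walk from the changed class through everything that
    // depends on it, collecting the classes that look like tests.
    Set<String> affectedTests(String changedClass) {
        Set<String> visited = new HashSet<>();
        Set<String> tests = new TreeSet<>();
        Deque<String> queue = new ArrayDeque<>();
        queue.add(changedClass);
        while (!queue.isEmpty()) {
            String current = queue.poll();
            if (!visited.add(current)) {
                continue; // already processed; also guards against cycles
            }
            if (current.endsWith("Test")) { // assumed naming rule for tests
                tests.add(current);
            }
            queue.addAll(dependents.getOrDefault(current, Collections.emptySet()));
        }
        return tests;
    }
}
```

Assuming edges such as FooTest depending on Foo, Foo depending on Fub, and BarTest depending on Bar, a change to Fub would select FooTest and leave BarTest alone.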

In this manner Infinitest quickly identifies the smallest subset of tests that need to be re-run and displays the results immediately to the developer without interrupting the development workflow. Figure 2 shows how Infinitest presents the results within the IntelliJ IDE.

Figure 2. Infinitest is continuously running tests affected by code changes in the background, providing developers timely feedback throughout the development process

Slow Tests

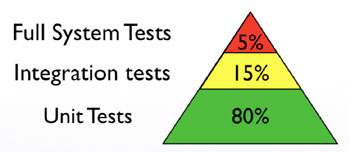

So if a CT tool is executing affected tests with every change, what happens with slow running tests, particularly if a developer is making frequent changes? First, it is helpful to clarify which kind of tests CT tools are most effective at running. Figure 3 illustrates one classification scheme for automated tests.

Figure 3. Well tested applications will usually have automated tests which run against the system under test at various levels of abstraction

CT tools don't know or care about whether your tests are unit or integration tests. Rather they work most effectively with fast tests regardless of classification as illustrated in Figure 4.

Figure 4. The tests from Figure 3 are reclassified as fast tests and slow tests with lighter shaded tests being slow running tests while darker shaded tests are fast running tests. Notice that tests are classified as fast or slow regardless of test type, i.e. some unit tests are slow while some system tests are fast

Infinitest in particular allows developers to exclude slow tests from the CT monitoring process through. In the future the Infinitest team hopes to automate the identification of slow running tests dynamically through the continuous testing process and exclude them from future test runs.

Because CT is not (yet at least) a complete replacement for CI, developers will still want to execute the project's build before checking in, and still include the full suite of tests in CI runs. While not replacing CI, CT gives developers as much feedback as rapidly as possible. Developers see the results of their changes as they are made rather than only when they run a manual build, or when they check in their changes and the CI server runs one or more configurations of tests against the new state of the code base.

CT tools also facilitate rapid feedback by allowing test preemption and prioritization. After a developer makes a change, affected tests begin running in the background and feedback is presented in real time to the developer, who is free to continue developing. If the developer completes another change before the previous test run completes, that run is preempted and a new test run is kicked off. Infinitest also optimizes the feedback provided to the developer by running tests which previously failed before tests which were previously passing.
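The prioritization idea, running previously failing tests first, might be sketched as follows. The class `TestPrioritizer` and its `prioritize` method are hypothetical names for this illustration and say nothing about how Infinitest implements ordering internally; preemption is not shown.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.Set;

// Hypothetical helper: orders a test run so recent failures give feedback first.
class TestPrioritizer {
    // Returns a copy of `tests` with previously failing tests moved to the front.
    // List.sort is stable, so the original order is kept within each group.
    static List<String> prioritize(List<String> tests, Set<String> previouslyFailed) {
        List<String> ordered = new ArrayList<>(tests);
        // The key is false for tests that failed last time, and false sorts before true.
        ordered.sort(Comparator.comparing((String test) -> !previouslyFailed.contains(test)));
        return ordered;
    }
}
```

The design choice here is to reorder rather than filter: every affected test still runs, but the tests most likely to still be failing report back first.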

A TDD Episode with CT

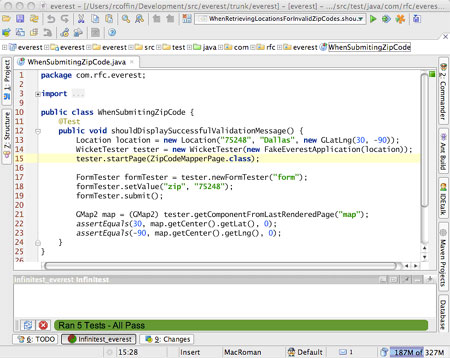

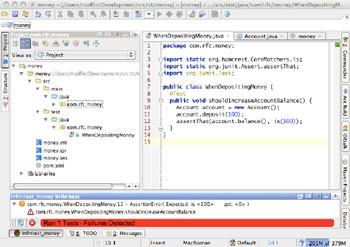

Let's see how CT complements TDD by walking through a very brief example. Let's say we're developing a very simple application to manage deposits and withdrawals to a checking account. Following the TDD process, the first step is to write a failing test that indicates the functionality we would like to add is not present yet in the system. As soon as the developer writes a failing test and enough code to make it compile, Infinitest presents the current failure directly within the IDE as shown in Figure 5. Notice that Infinitest quite visibly indicates that we are now in a failing state with a big red bar, and also shows the cause for the error.

Figure 5. Infinitest presenting an error immediately after compilation

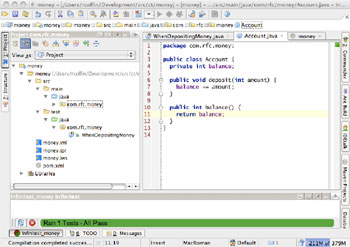

At this point in the TDD process the developer writes just enough code to make the test pass causing Infinitest to rerun affected tests and report that all tests are now passing as shown in Figure 6.

Figure 6. Infinitest reruns affected tests, and in this case shows that all tests are now passing
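For readers without access to the screenshots, the episode might look roughly like the sketch below. The class and method names (`CheckingAccount`, `deposit`, `withdraw`, `balance`) are assumptions for illustration, not the code shown in the figures, and the check is written in plain Java so the example stands alone; in a real project it would typically be a JUnit test method.

```java
// Hypothetical production class: just enough code to make the test pass.
class CheckingAccount {
    private long balanceInCents;

    void deposit(long cents) {
        balanceInCents += cents;
    }

    void withdraw(long cents) {
        balanceInCents -= cents;
    }

    long balance() {
        return balanceInCents;
    }
}

// Hypothetical test, written first. Before deposit and withdraw had real
// bodies, this check would fail and a CT tool would show a red bar.
class CheckingAccountTest {
    static void depositThenWithdrawUpdatesBalance() {
        CheckingAccount account = new CheckingAccount();
        account.deposit(10_000);
        account.withdraw(2_500);
        if (account.balance() != 7_500) {
            throw new AssertionError("expected 7500 but was " + account.balance());
        }
    }
}
```

With a CT tool watching the project, saving either class would trigger the test again without the developer leaving the editor.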

In this way Infinitest specifically, and CT tools in general, facilitate the TDD workflow by providing rapid Red/Green feedback without the developer having to shift focus and run tests manually. CT tools will also run more tests in each cycle than a developer, who typically only re-runs the current test which is driving the code changes.

CT Tools Overview

Although we used Infinitest to illustrate the idea of CT throughout this article, it is not the only tool of its kind. Table 1 below lists the various CT tools currently available.

| Tool | Language | Test Selection Strategy |
| --- | --- | --- |
| Infinitest | Java | Dependency Analysis |
| JUnit Max | Java | Runs all with prioritization |
| Infintest Python | Python | Naming convention |
| AutoTest | Ruby | Naming convention |
| AutoTest | Rails | Convention, metadata |
| CT-Eclipse | Java | Runs all with prioritization |

Table 1. Available CT tools

Although the primary differentiator between these tools is the language they each support, they also vary in the strategy they employ to identify which tests need to be run in response to any given change. As illustrated earlier, Infinitest employs byte-code level dependency analysis to make this determination. Alternatives include analysis based on source files, conventions, project metadata, and the running of all tests in response to any change. These strategies are sometimes augmented with test prioritization to provide the most meaningful feedback first.
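To contrast with dependency analysis, a convention-based strategy can be as simple as mapping a changed file name to an expected test name. The sketch below assumes a `<ClassName>Test` convention and a hypothetical `ConventionTestSelector` class; it does not reproduce the rules used by any tool in Table 1.

```java
// Hypothetical helper: selects a test purely from the changed file's name.
class ConventionTestSelector {
    // Maps a changed source path such as "src/main/java/bank/Account.java"
    // to the simple name of the test expected to cover it ("AccountTest").
    static String testNameFor(String changedSourcePath) {
        // Drop any directory prefix; lastIndexOf returns -1 when there is none.
        String fileName = changedSourcePath.substring(changedSourcePath.lastIndexOf('/') + 1);
        String className = fileName.endsWith(".java")
                ? fileName.substring(0, fileName.length() - ".java".length())
                : fileName;
        // A changed test file maps to itself.
        return className.endsWith("Test") ? className : className + "Test";
    }
}
```

This approach needs no analysis of the code at all, which makes it cheap, but it misses tests that exercise a class indirectly, which is the gap dependency analysis is meant to close.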

An interesting technique has been added to the latest version of Clover (a code coverage tool) that allows Clover to execute only the tests that need to be run in response to any given set of changes, based on code coverage metrics. Clover isn't a CT tool per se because it runs as part of the build or with manually run unit tests from within your IDE, but the idea of using these types of metrics in the test selection process is quite powerful, and hopefully in the future we'll see CT tools offering this capability as well.

Conclusion

In this article we have seen how CT extends the practices of TDD and CI to offer the best possible feedback as quickly as possible throughout the development process. We have seen this illustrated with Infinitest and have seen a few other tools for other languages and with different strategies for test selection. My hope is that you come away with an interest in the practice and tooling around CT. I encourage you to pick up and try out one of the CT tools available and to contribute back to the CT community through mailing lists, reporting bugs, suggesting features, and possibly even developing new implementations for different languages and environments.

About the Author

Rod Coffin is a Principal Consultant at Improving Enterprises which provides training, consulting, and outsourcing services. He has over 10 years of professional software development experience across a wide variety of industries, technologies, and roles. He has coached and mentored several teams on agile software development and is equally passionate about the organizational and technical sides of effective software development. He is a frequent speaker at software conferences and has written many articles on a range of software development topics including agility, enterprise software development, and semantics. http://www.improvingenterprises.com/
