Test Automation in the Agile World

After decades of talking about test automation, the agile movement suddenly seems to be taking it seriously. You might be wondering what all the buzz is about. Is this something software developers didn’t realize during the waterfall era? How did the waterfall teams survive without automation?

I’d like to talk about why test tooling is suddenly so critical, when teams should think of automating, and how to bring the change.

Why Do We Need Test Automation?

Test automation as a concept is independent of method. There were many waterfall teams that automated checking and evaluation of their test processes; the question is, why is it so popular now?

My personal view is that, although many things came together in the last ten years, the main driver is the frequent releases that agile requires. Now that agile methodology has become widespread, teams need to fit the entire plan/build/test/deploy window into two weeks, and two months of test/fix/retest won’t cut it. Speed, accuracy, and quality have become the key factors driving agility in the organization.

When Should We Embrace Test Automation?

You should not adopt automation “just because” Amazon, Facebook, or some popular company is doing it. First, find out the need. You might ask, When there are benefits across the board with cost, speed, quality, and accuracy, why should we delay? Well, these benefits must be understood before introducing test automation.

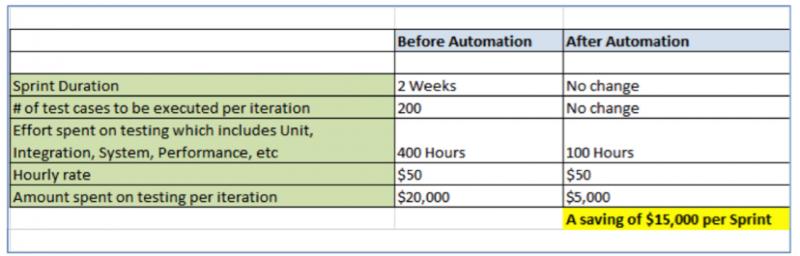

You might want to actually make a spreadsheet clearly quantifying the benefits. Here’s a sample spreadsheet to help you think about goals and possibilities with tools:

Don’t get carried away too much with the above numbers. Results vary for different teams and circumstances. In fact, I have come across situations where test automation could do more harm than good.

So quantify your process. Doing so reinforces data-driven thinking and helps you avoid emotional decisions. If you cannot quantify the benefits, don’t waste time on automation.
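To make the quantification concrete, here is a minimal sketch of the arithmetic such a spreadsheet might hold. Everything in it is an assumption for illustration: the `AutomationEstimate` fields, the function names, and all of the hour figures are hypothetical and are not taken from the sample spreadsheet. Replace them with your own team’s measurements.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class AutomationEstimate:
    """Hypothetical inputs; every figure should come from your own team's data."""
    manual_hours_per_run: float       # effort to run the checks by hand once
    automated_hours_per_run: float    # effort to run and triage the automated suite once
    maintenance_hours_per_run: float  # upkeep of the automation, averaged per run
    build_hours: float                # one-time effort to write the automation
    runs_per_year: int                # how often the checks are executed


def hours_saved_per_run(e: AutomationEstimate) -> float:
    """Net hours saved each time the automated suite replaces a manual run."""
    return e.manual_hours_per_run - e.automated_hours_per_run - e.maintenance_hours_per_run


def break_even_runs(e: AutomationEstimate) -> Optional[int]:
    """Runs needed to pay back the build effort, or None if it never pays back."""
    saved = hours_saved_per_run(e)
    if saved <= 0:
        return None
    return math.ceil(e.build_hours / saved)


def net_hours_first_year(e: AutomationEstimate) -> float:
    """Hours gained (positive) or lost (negative) in the first year."""
    return hours_saved_per_run(e) * e.runs_per_year - e.build_hours


# Illustrative numbers only: a regression pass run once per two-week sprint.
worthwhile = AutomationEstimate(
    manual_hours_per_run=16, automated_hours_per_run=1,
    maintenance_hours_per_run=3, build_hours=120, runs_per_year=26,
)

# Illustrative numbers only: a cheap manual check with fragile automation.
harmful = AutomationEstimate(
    manual_hours_per_run=2, automated_hours_per_run=0.5,
    maintenance_hours_per_run=2, build_hours=40, runs_per_year=26,
)

print(break_even_runs(worthwhile), net_hours_first_year(worthwhile))
print(break_even_runs(harmful), net_hours_first_year(harmful))
```

With these made-up inputs, the first case pays back its build effort within the year, while the second never does because maintenance costs more than the manual run it replaces. That second case is the kind of situation where automation can do more harm than good.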

How Should We Introduce Test Automation to the Team?

Every change initiative should start with building awareness about the change. In this case as well, test automation awareness training should be conducted. Other channels to build awareness include brown-bag sessions, inviting industry experts to speak, or getting involved in meet-up groups. At this point, setting up a coaching kata, as described by Mike Rother in his book Toyota Kata, is a good idea. Put simply, management goes from the high-level goals the team needs to accomplish to smaller, more manageable goals, and coaches the technical staff on how to accomplish each step.

Even though test automation is, of course, mostly related to testing, business analysts, developers, database administrators, and anyone else who works with testers should be involved in the awareness sessions, too. We are talking about the test process here, and in an agile environment, software testing is everyone’s responsibility.

Once the training is over, the team should be excited to get started. This is where you need to be a bit cautious about choosing the right set of work. Don’t try to automate everything. Choose a piece of work that is prone to break, and write it as a story. When this issue arises, it should be caught by an automated check. I have found that the best place to start is often with programs that touch multiple interfaces. Not only are these programs failure-prone, but a single run of the program could exercise and check several different potential failure points.
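As an illustration of a single check that exercises several failure points, here is a small sketch using Python’s standard `unittest` module. The `place_order` function and the three in-memory fakes (`FakeInventory`, `FakePayments`, `FakeMailer`) are hypothetical stand-ins invented for this example; a real team would point the check at its own interfaces.

```python
import unittest


class FakeInventory:
    """Hypothetical in-memory stand-in for an inventory interface."""
    def __init__(self, stock):
        self.stock = dict(stock)

    def reserve(self, sku, qty):
        if self.stock.get(sku, 0) < qty:
            raise ValueError(f"insufficient stock for {sku}")
        self.stock[sku] -= qty


class FakePayments:
    """Hypothetical stand-in for a payment interface; records each charge."""
    def __init__(self):
        self.charges = []

    def charge(self, customer, amount):
        self.charges.append((customer, amount))


class FakeMailer:
    """Hypothetical stand-in for a notification interface; records each message."""
    def __init__(self):
        self.sent = []

    def send(self, customer, message):
        self.sent.append((customer, message))


def place_order(inventory, payments, mailer, customer, sku, qty, unit_price):
    """Hypothetical program under test: it touches three interfaces in sequence."""
    inventory.reserve(sku, qty)
    payments.charge(customer, qty * unit_price)
    mailer.send(customer, f"Order confirmed: {qty} x {sku}")


class PlaceOrderCheck(unittest.TestCase):
    def setUp(self):
        self.inventory = FakeInventory({"widget": 5})
        self.payments = FakePayments()
        self.mailer = FakeMailer()

    def test_successful_order_touches_all_three_interfaces(self):
        place_order(self.inventory, self.payments, self.mailer,
                    "alice", "widget", 2, unit_price=10)
        # One run checks three potential failure points.
        self.assertEqual(self.inventory.stock["widget"], 3)
        self.assertEqual(self.payments.charges, [("alice", 20)])
        self.assertEqual(len(self.mailer.sent), 1)

    def test_out_of_stock_order_charges_nothing(self):
        with self.assertRaises(ValueError):
            place_order(self.inventory, self.payments, self.mailer,
                        "bob", "widget", 9, unit_price=10)
        # The failure must not leak into the other interfaces.
        self.assertEqual(self.payments.charges, [])
        self.assertEqual(self.mailer.sent, [])
```

Saved in a file, the checks can be run with `python -m unittest`. The second test captures the "prone to break" case as a story: if a future change charges the customer before reserving stock, this check should catch it.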

From a few of these specific checks, the team may begin to see a framework arise; that framework is also a story, or a piece of development work. On top of that automation infrastructure, the team may want to add continuous integration and visual radiators that indicate when new code has caused failures. They could be simple monitors connected to servers, with the monitors providing visual feedback about testing quality, performance, and time-related parameters.
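The logic behind a simple visual radiator can be sketched as a function that reduces a batch of check results to a single status. This is only an assumed shape: the `CheckResult` record, the `radiator_status` function, and the color names are hypothetical, and a real setup would read results from whatever continuous integration server the team uses.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CheckResult:
    """Hypothetical record of one automated check from the latest build."""
    name: str
    passed: bool
    seconds: float


def radiator_status(results: List[CheckResult]) -> Dict[str, object]:
    """Summarize the latest build for display on a shared monitor."""
    failed = [r.name for r in results if not r.passed]
    if not results:
        color = "grey"   # nothing ran, so there is nothing to report
    elif failed:
        color = "red"    # at least one check failed on the new code
    else:
        color = "green"  # every check passed
    return {
        "color": color,
        "failed": failed,
        "total": len(results),
        "duration_seconds": sum(r.seconds for r in results),
    }


# Illustrative data only.
latest_build = [
    CheckResult("order_flow", passed=True, seconds=1.5),
    CheckResult("nightly_export", passed=False, seconds=2.5),
]
print(radiator_status(latest_build))
```

Keeping the summary this small is a deliberate choice: the monitor only needs to answer whether the new code broke something, which check broke, and how long the run took.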

In one of the organizations I worked in, the company invested heavily in a tool. However, it made the mistake of leaving the teams to learn it on their own. After two years, 70 percent of the employees still didn’t know how to use the tool. It was used sparingly, and the benefits were never fully reaped. Appointing a single coach with the responsibility to teach the tool could have prevented this, or at least fostered learning. (Not all tools will work for all teams; the key is to learn and adapt what works in which environments.)

Brainstorm to Solve Problems

Automating the wrong test cases brings no benefit to the team or organization. Test tooling might be a good strategy for you, but if you are having problems explaining or selling the value, it might be because the kind of test tooling you are thinking of is not a great investment for the company—at least, not a great investment today, for the way things are currently imagined.

So conduct an experiment. Create a story. Ask the technical team what they want to do. Tackle the problem from several angles. Then, come back in a few months or a year and see how things are going.
