Companies launching a new test automation initiative—and any salespeople involved in selling them the associated tools—tend to think that their success hinges upon a flawless rollout. As a test automation consultant, I’d like to provide a reality check based on what I see in the field. The initial rollout could be a rocky road if you’re unprepared, but over the long term, that’s not what’s going to make or break your test automation initiative.

I’ve recently witnessed several companies start to roll out test automation companywide with little to no preparation. The risk of this “strategy” is that testers are likely to resist test automation for a number of reasons:

- They feel that life was fine without it

- They don’t want to learn the new tooling (or don’t have time to)

- They worry that it’s too complicated and the effort required to get up to speed outweighs the perceived benefits

A poorly planned—or totally unplanned—rollout obviously isn’t the best way to launch a test automation initiative. However, I’ve found that it’s always easier to recover from a rocky rollout than it is to achieve sustainable test automation without an appropriate test automation team structure in place.

I’d like to share some proven practices for a few different test automation adoption scenarios that have helped numerous organizations enjoy long-term success—even when the initial rollout wasn’t ideal.

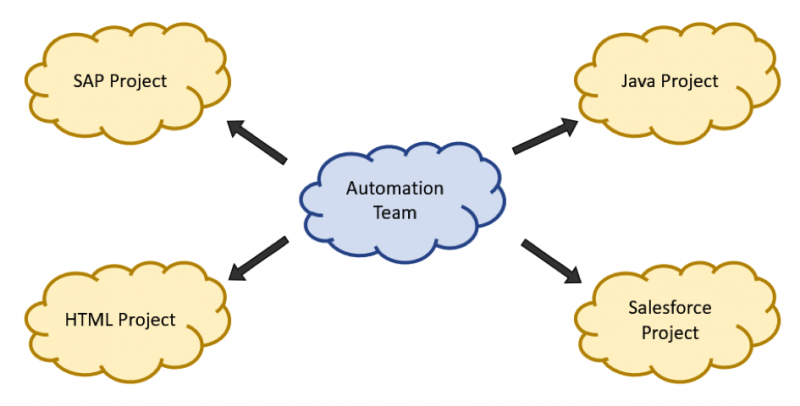

Automation Team Rolling Out Test Automation Project by Project

The best piece of advice I can give is to start small, then go big. The first proven practice is to create an automation team.

This team will iterate through the different projects and start automating the most important test cases. This lets you demonstrate progress on the initiative and gives you some insight into how application changes impact key application functionality.

I recommend starting with the most impactful test cases: the ones that cover your top business risks. This creates the foundation for a powerful regression portfolio that can provide fast feedback on whether application changes broke previously working functionality.
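To make "top business risks" concrete, here is a minimal sketch of one way to rank candidate test cases. It assumes each candidate gets two hypothetical 1 to 5 scores, how often the function is used and how much damage a failure would cause, and treats their product as the risk weight. The class, field names, scores, and test names are all illustrative and not taken from any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegressionCandidate:
    name: str
    frequency: int  # assumed 1-5 score: how often the business uses this function
    damage: int     # assumed 1-5 score: how costly a failure would be

    @property
    def risk(self) -> int:
        # Simple risk weight: usage frequency multiplied by failure damage.
        return self.frequency * self.damage


def prioritize(candidates: list[RegressionCandidate], budget: int) -> list[RegressionCandidate]:
    """Return the `budget` highest-risk candidates, highest risk first."""
    ranked = sorted(candidates, key=lambda c: c.risk, reverse=True)
    return ranked[:budget]


candidates = [
    RegressionCandidate("checkout_payment", frequency=5, damage=5),
    RegressionCandidate("update_profile_picture", frequency=2, damage=1),
    RegressionCandidate("login", frequency=5, damage=4),
    RegressionCandidate("export_report_pdf", frequency=1, damage=3),
]

# The automation team can only automate two test cases in the first wave.
first_wave = prioritize(candidates, budget=2)
print([c.name for c in first_wave])
```

The scoring itself matters less than agreeing on it with the business side, so that the first automated test cases are the ones everyone considers critical.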

The automation team should include at least one test design specialist and two or three automation specialists. The test design specialist starts by reviewing the requirements and using test case design approaches to map out the test cases that should be automated. Then, the test design specialist or one of the automation specialists can evaluate whether test data management (TDM) is needed, and they can collaborate on defining the test cases. While the automation specialists build those test cases, the test design specialist moves on to the next project.

Depending on the software you want to test and how much you have customized it, an automation engineer could be required. They will ensure that custom controls are created and later maintained.

The size of the team will increase over time, depending on the number of test cases and projects the team has to handle. These suggestions are only for the initial rollout. Once the rollout starts progressing, you’ll want to recruit more people as soon as possible.

Once the test cases are automated, they can run on test virtual machines overnight, then report execution results on a daily basis. The most important thing for getting test automation up and running is to actually run test cases! If you’re not running your tests, you’re not receiving any value from them, no matter how well-designed your test suite is and how well it covers your top business risks.
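As a rough illustration of daily reporting, the sketch below turns the outcome of one overnight run into a short text summary. It assumes results arrive as a plain mapping from test name to one of three hypothetical status strings ("passed", "failed", "not_run"). A real test tool will have its own result format, so treat the function and data as placeholders.

```python
from collections import Counter


def summarize_run(results: dict[str, str]) -> str:
    """Build a short daily report from {test name: status}."""
    counts = Counter(results.values())
    total = len(results)
    executed = counts["passed"] + counts["failed"]
    needs_review = sorted(name for name, status in results.items() if status == "failed")

    lines = [
        f"Executed {executed} of {total} test cases",
        f"Passed: {counts['passed']}, failed: {counts['failed']}, not run: {counts['not_run']}",
    ]
    if needs_review:
        lines.append("Needs review: " + ", ".join(needs_review))
    return "\n".join(lines)


# Hypothetical outcome of one overnight run.
nightly_results = {
    "checkout_payment": "passed",
    "login": "failed",
    "export_report_pdf": "not_run",
}

report = summarize_run(nightly_results)
print(report)
```

Reporting the "not run" count alongside passes and failures keeps the point above visible: a test case that never executes delivers no value, however well it is designed.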

Depending on your company structure (agile or not, testing teams or independent testers), the automation team may take the lead on educating everyone about the overall automation best practices to apply and the project variations they should consider as they start their own test automation.

Before testers start applying test automation, a decision must be made: Who is responsible for the test cases? This is critical. If the automation team (or maybe later the regression team) is responsible, then they have to communicate much more with the testers and the business side. If it’s the testers’ responsibility, they need enough time to ensure all test cases are running and meet business expectations. Each organization needs to decide what’s best for them, depending on their structure and working style.

Regardless of which direction you take, be sure to save enough time for communication. On one hand, new test cases need to be reviewed by the regression team and then set up for execution. On the other hand, the regression test results (especially failed test cases) need to be shared so that responsible team members or testers can review them and update the tests if needed. At a minimum, ensure that testers independently check regression results and make the appropriate adjustments, and that the regression team can pull new test cases from a shared folder and review them on their own.

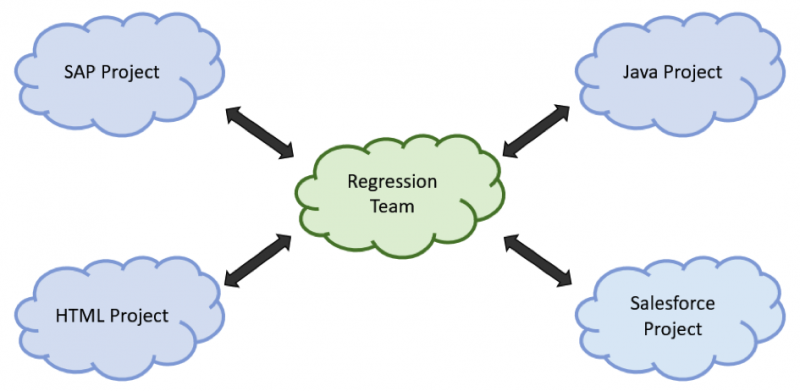

Regression Team Leading and Mentoring Testers on Each Project

The most effective setup I’ve seen is to establish an initial automation team that evolves into a regression team once the test automation foundation is established and others are prepared to drive it forward.

The regression team remains responsible for creating test cases in initial projects, maintaining existing test cases, running test cases, guarding the testing infrastructure, and overseeing the broader test automation setup and project. You could call this team the test center of excellence.

With this setup, it’s possible to have projects and bigger changes steered through test design specialists, who will take care of the right format and provide an overview of what it should look like. They are like software architects (or, in our case, test architects).

The regression team is the “go-to group” if you have questions regarding problems or you want to drive new innovations. The ownership and responsibility should be the regression team’s because they have the best overview of the test portfolio.

From a resource view, you need highly skilled people on the regression team, but you could get away with less experienced team members as testers. If the testers need more knowledge than the training provides, they can always ask the regression team, who can then help by sharing the knowledge they have built up.

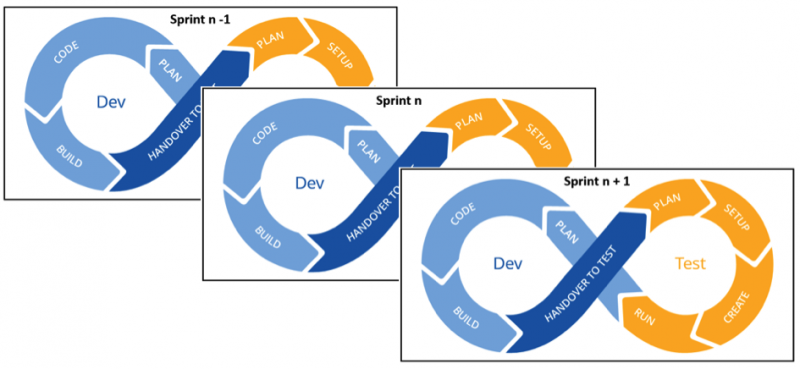

Adaptations for Agile Environments

For agile environments, test automation is driven by an agile tester within the team. This person should be more advanced than an automation specialist—they need to understand test case design, requirements, test case creation and execution, and core testing best practices, and they need a good understanding of what to test and how to test it effectively.

I recommend a continuous setup for development and testing from sprint to sprint:

When development finishes the feature for Sprint n-1, testing of that feature starts. Meanwhile, development continues with the next feature in Sprint n, and so on. Just be sure to reserve time for fixing bugs and further improvements.
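The staggering described here can be sketched as a simple schedule in which testing trails development by one sprint. The function name and the "feature N" labels are hypothetical, and the sketch assumes one feature per sprint purely for illustration.

```python
from typing import Optional


def staggered_schedule(sprints: int) -> list[tuple[int, str, Optional[str]]]:
    """For each sprint, pair the feature in development with the feature in test."""
    rows = []
    for sprint in range(1, sprints + 1):
        developing = f"feature {sprint}"
        # Testing covers the feature finished in the previous sprint;
        # in the very first sprint there is nothing to test yet.
        testing = f"feature {sprint - 1}" if sprint > 1 else None
        rows.append((sprint, developing, testing))
    return rows


schedule = staggered_schedule(3)
for sprint, developing, testing in schedule:
    print(f"Sprint {sprint}: develop {developing}, test {testing or 'nothing yet'}")
```

In practice each sprint also needs slack for bug fixes coming back from the previous sprint's testing, which this sketch leaves out.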

Do We Separate Development and Testing?

Finally, I want to add my two cents to a couple of questions that come up frequently when talking about structure: Should our developers test, or should we separate development and testing? Should we use software development engineers in test (SDETs)?

Theoretically, you could save time and money by having developers test too because developers know the code the best. But let’s be honest: Dev never has enough time to complete all the development tasks that everyone wants completed. If they’re also responsible for test automation, that means they have even less time to complete those tasks. And even if developers know the code the best, are you sure they’re checking edge conditions? Anticipating the risks and trying to ask the right questions? Probing the system and pushing it to a failure?

My opinion is that someone who didn’t write the code is better suited for this. Dedicated testers are focused on achieving realistic business goals through the application and on thinking of every possible way of steering the application that could lead to an error in the system. From what I’ve seen in the field, that’s not always a developer strength.

Turning the Tables

All these suggestions are based on my personal experiences helping users adopt test automation. I know every reader probably has a different perspective on this—and I encourage you to share your thoughts about it below. What helped you most? How did you set up test automation in your company? What challenges did you face?