At regular intervals, the team reflects on how to become more effective, then tunes and adjusts its behavior accordingly. (Agile Manifesto principle 12)

A cornerstone of agility is the team's commitment to continued reflection and adaptation. For most teams, there is a natural cadence to this process: iterations occur frequently, typically every one to two weeks, and releases occur every two to four months. The iteration boundary therefore represents a frequent opportunity for immediate feedback and quick mid-course correction, focused primarily on the team's ability to simultaneously define, code, and test new functionality in a time box. At the release boundary, the measures shift to those that reflect the team's and the organization's ability to move that functionality from "inventory" to delivered product or system. In this article, we'll describe a set of iteration and release metrics that have been used to good effect by a number of agile teams. In our experience, teams that use these iteration and release metrics effectively have the best chance of achieving the maximum benefits of agile.

Iteration Retrospective

The iteration retrospective provides the first tactical opportunity for assessment and process improvement. Indeed, for most teams new to agile, this is a key learning opportunity, and it will most likely reveal the main reasons the team was unable to accomplish the objectives of the iteration in the time allowed. After all, team members are new to agile and much is different; it is unreasonable to expect the first few iterations to be winners. This quick feedback loop is yet another reason that shorter iterations produce better results. The shorter the iteration length, the quicker the feedback and the more successful the following iterations are likely to be!

Format of the Iteration Retrospective

Iteration retrospectives are time boxed to an hour or less, and most agile teams proceed along two distinct paths. The first, a quantitative assessment, establishes the key metrics for the iteration. The second, a qualitative assessment, determines "what went well" and "what didn't."

Quantitative Assessment

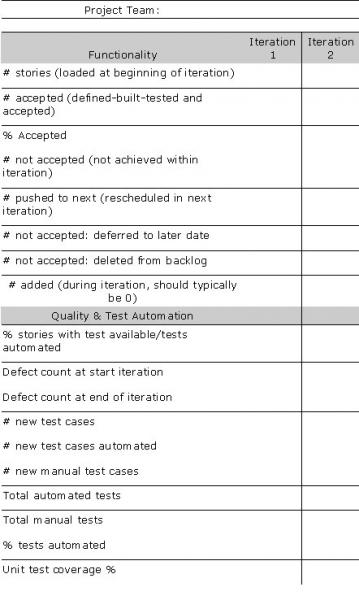

The quantitative assessment proceeds by gathering and reporting the metrics for the iteration. Table 1 provides a set of metrics initially developed by a project team building wireless infrastructure software, which we have since applied successfully in a number of diverse software project contexts. Each row in the table indicates a measure that the team determined was important to its process. The columns track progress over time.

Table 1: Sample iteration assessment metrics

By reviewing these metrics, teams can gain a number of real insights into the types of measurements that are important to this agile team.

Functionality/Story Achievement

The first eight rows all address the same question: how many stories (which could be any of user stories, use cases, use case scenarios, jobs, defect suites, etc.) did the team complete compared to plan? It is not unusual to see this vary from 30-40% to as much as 100%, with a range of 50-80% being typical.[1] In addition, the other rows in this section imply an interesting "root cause analysis" that answers the question: "For the stories that we did not complete, what happened and where did they go?" (Rescheduled, deferred, deleted?)
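The story achievement measure and its breakdown can be illustrated with a minimal sketch. The `Story` type, the outcome labels, and the function name below are assumptions made for illustration, taken from the dispositions named above; they are not the layout of the team's actual table.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical outcome labels, based on the dispositions named in the text.
COMPLETED = "completed"
OUTCOMES = {COMPLETED, "rescheduled", "deferred", "deleted"}


@dataclass
class Story:
    name: str
    outcome: str  # one of OUTCOMES


def story_achievement(planned):
    """Return (percent of planned stories completed, breakdown of the rest)."""
    if not planned:
        raise ValueError("an iteration needs at least one planned story")
    for story in planned:
        if story.outcome not in OUTCOMES:
            raise ValueError(f"unknown outcome: {story.outcome!r}")
    done = sum(1 for s in planned if s.outcome == COMPLETED)
    percent = 100.0 * done / len(planned)
    # "Where did they go?": count the incomplete stories by disposition.
    breakdown = Counter(s.outcome for s in planned if s.outcome != COMPLETED)
    return percent, breakdown


# Illustrative data only: 10 planned stories, 7 completed.
iteration = (
    [Story(f"story-{i}", COMPLETED) for i in range(7)]
    + [Story("story-7", "rescheduled"), Story("story-8", "rescheduled")]
    + [Story("story-9", "deferred")]
)
percent, breakdown = story_achievement(iteration)
print(f"{percent:.0f}% of planned stories completed; not completed: {dict(breakdown)}")
```

Reporting the breakdown alongside the percentage keeps the retrospective focused on why stories slipped, not just how many.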

Quality and Other Key Process Indicators

The second section of this table provides additional insights. In this section, this team appears to be tracking four important things:

- Ability to test new stories within the iteration. The team is measuring its ability to test each new story within the iteration boundary, a key aspect of agile progress.

- Defects. Yes, defects still exist with agile, the difference being that the numbers are far smaller and they are addressed on a continuous basis, rather than via an all-hands-on-deck-lumpy-triage towards the end of the release cycle.

- Progress on test automation. Clearly, this team understands that its ability to keep up with test automation is a gating factor in agility, and it is tracking the rate at which it is reducing the backlog of manual tests.

- Unit test coverage. Another key indicator, or better, a key forecaster of ultimate quality, is the percentage of code covered by unit tests of one sort or another. For most teams, 60-70% unit test coverage is a good result.

These iteration metrics, along with their trends over time, will provide an ongoing indicator of the team's real progress.
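As a rough sketch of what tracking these trends might look like, the snippet below computes the iteration-over-iteration change for two of the indicators above. The metric names and all of the numbers are invented for illustration; they are not data from the team described here.

```python
def trend(values):
    """Return the change in a metric from each iteration to the next."""
    return [later - earlier for earlier, later in zip(values, values[1:])]


# Illustrative values only, one entry per iteration.
unit_test_coverage_pct = [52, 58, 63]   # percent of code covered by unit tests
manual_test_backlog = [120, 95, 80]     # manual tests not yet automated

coverage_trend = trend(unit_test_coverage_pct)
backlog_trend = trend(manual_test_backlog)

print("coverage change per iteration:", coverage_trend)
print("manual test backlog change per iteration:", backlog_trend)
```

A rising coverage series and a falling backlog series would both point in the direction the team wants; a flat or reversing trend is a natural topic for the retrospective.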

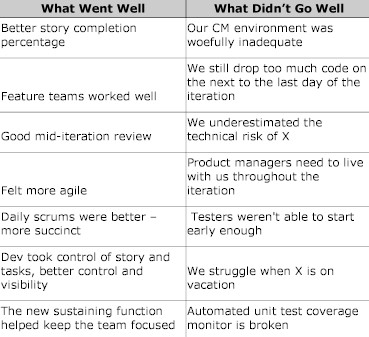

Qualitative Assessment

Once the quantitative assessment is done, the next portion of the meeting is typically conducted by asking and answering two key questions of the team.

- What did we do well on this iteration?

- What did not go so well on this iteration?

Table 2 below provides an example of some of the types of feedback teams provide on these two key questions.

Table 2: Examples of qualitative team feedback on an iteration