Deliverable-oriented project management and test-driven development can be combined to provide an objective and easily understandable way of measuring project progress for the client, team members, and management. In this article, John Ferguson Smart presents a case study of how this approach was made to work.

Test-based Project Progress Reporting

Introduction

This article presents a practical study of how we use deliverable-oriented project management and test-driven development as an objective and easily understandable way of measuring project progress for the client, team members, and upper management.

Defining deliverables

All projects, by definition, have deliverables. In an iterative approach, the main deliverable (the application) can be divided into smaller deliverables (e.g., modules, functions, or user story implementations), in order to define an iterative, milestone-based delivery schedule.

The WBS and the project plan

A work breakdown structure (WBS) is a well-known and extremely useful tool for breaking a project into easily manageable--some would say, "chewable"--tasks. At a certain level, you assign WBS tasks to individual team members, or, in some special cases, a small group of team members, and expect them to produce a "concrete" deliverable.

Work breakdown structures tend to be intimately linked to project plans. We use an MS Project plan for global project planning. Here, as in the WBS, work is detailed down until each task corresponds to a deliverable item and is assigned to a team member. Assigning tangible deliverables to each team member helps focus the development activity with concrete, short-term goals, and also helps in obtaining developer buy-in and responsibility.

Test cases

Naturally, we wrote a set of test cases for each deliverable. The test cases represent the acceptance criteria for each module. There are many ways of doing test plans and test cases. Most will contain, in one form or another, a list of actions or steps to be performed, accompanied by the expected result. In our case, for each deliverable module, we put the corresponding test cases in a separate Excel spreadsheet, along with some extra information for ease-of-use:

- A unique test case number

- The screen ID

- The screen zone or area

- Action to be performed

- Expected results

- Obtained results:

  - Result: passed, failed, not tested

  - Description of any undesired behavior

  - Related defect tracking issue(s)

In our experience, a good set of test cases can give an excellent indication of the production readiness of a deliverable. Ideally, test cases are handed to the developer along with the functional specifications, though in practice they often come a little later. The analysis documents and the test cases provide concrete, tangible objectives for each module and keep developers focused on code with real added value for the end-user.
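As a rough illustration, the spreadsheet columns listed above could be modeled as a small record type. This is only a sketch: the class name `CaseRecord`, the field names, and the sample identifiers are hypothetical and do not come from the project's actual spreadsheet.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Result(Enum):
    PASSED = "passed"
    FAILED = "failed"
    NOT_TESTED = "not tested"


@dataclass
class CaseRecord:
    """One row of a per-module test case sheet (field names are assumptions)."""
    number: str                          # unique test case number
    screen_id: str                       # the screen ID
    screen_zone: str                     # the screen zone or area
    action: str                          # action to be performed
    expected: str                        # expected results
    result: Result = Result.NOT_TESTED   # obtained result
    observed_problem: str = ""           # description of any undesired behavior
    defect_ids: List[str] = field(default_factory=list)  # related defect issues


# Hypothetical sample row: a tester records a failure and links a defect.
example = CaseRecord(
    number="TC-001",
    screen_id="SCR-LOGIN",
    screen_zone="header",
    action="Click the Help link",
    expected="The help page opens",
)
example.result = Result.FAILED
example.observed_problem = "Link returns an error page"
example.defect_ids.append("DEF-42")
print(example.number, example.result.value, example.defect_ids)
```

A new row defaults to "not tested", which matches the idea that every test case counts toward the module total from the day it is written.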

Measuring progress using tests

Measuring test results

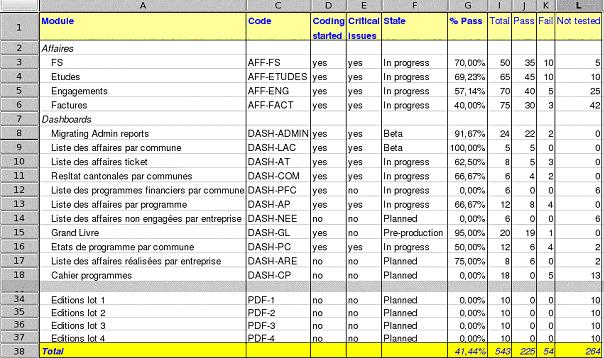

Test-based progress reports add an easily understandable, objective view on project progress. In our current project, the main test status report summarizes the following for each module:

- Total test cases

- Passed tests

- Failed tests

- Untested tests

We base our metrics on three main considerations:

- A module is considered finished when all test cases have been successfully run by QA. In our case, QA includes internal testing teams and client testers.

- The number of test cases needed to test a module approximately reflects its complexity. While this is not always true, we find it as good a measurement as any other.

- The development is iterative: New versions are delivered frequently and testing is done continually, not just at the end of the project.

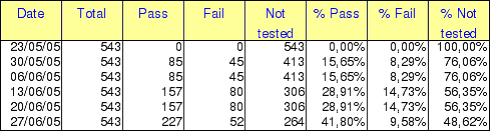

In these conditions, overall progress on the different modules may be obtained by measuring the relative number of successful test cases for each module. If you can obtain reliable data on the number of test cases passed, failed, and untested at a given point in time, it is fairly easy to put them into a spreadsheet as shown in Figure 1.

Figure 1: Test status follow-up spreadsheet
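The per-module tally behind such a spreadsheet can be sketched in a few lines. The function name `summarize`, the result strings, and the module names below are assumptions made for illustration; the original project kept these figures in Excel.

```python
from collections import Counter


def summarize(results):
    """Tally a module's test results.

    `results` is a list of strings: "passed", "failed", or "not tested".
    """
    counts = Counter(results)
    total = len(results)
    passed = counts["passed"]
    return {
        "total": total,
        "passed": passed,
        "failed": counts["failed"],
        "untested": counts["not tested"],
        # Share of all test cases (including untested ones) that pass.
        "percent_passed": 100.0 * passed / total if total else 0.0,
    }


# Hypothetical data for two modules.
modules = {
    "Module A": ["passed"] * 17 + ["failed"] * 2 + ["not tested"],
    "Module B": ["not tested"] * 12,
}
for name, results in modules.items():
    print(name, summarize(results))
```

Note that the percentage is taken over all test cases, not only those already run, so an untested module reports zero progress rather than an undefined value.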

Module progress status

I never trust a developer who says that a module is finished. In my book, a module is finished when all the test cases pass, period. However, it is generally accepted wisdom to say that a program is ready for beta testing when approximately 85 percent of the test cases pass. And, although you theoretically need a 100 percent success rate for a module to be production-ready, our client will generally accept going to production with a small number of non-critical issues which can be fixed at a later date. So we also defined a "pre-production" state for modules with at least a 95-percent success rate and no critical issues.

Finally, I find it's motivating for the troops to distinguish modules on which coding has begun from truly new modules.

We distinguish five states representing five development stages, which are objectively measured by the number (or percentage) of test cases which pass:

- Planned: coding hasn't started yet.

- In progress: coding has started.

- Beta: at least 85 percent of the test cases pass.

- Pre-production: at least 95 percent of the test cases pass, and there are no critical open issues.

- Production-ready: 100 percent of the test cases pass.
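The five states above lend themselves to a simple classification rule. The sketch below assumes the percentages are minimum thresholds, and that a module with open critical issues cannot be rated higher than Beta unless every test case passes; the function name and parameters are hypothetical.

```python
def module_state(total, passed, coding_started, open_critical_issues=0):
    """Return the development stage of a module from its test results."""
    if total > 0 and passed == total:
        return "Production-ready"
    # Integer comparisons avoid floating-point rounding at the thresholds.
    if total > 0 and passed * 100 >= 95 * total and open_critical_issues == 0:
        return "Pre-production"
    if total > 0 and passed * 100 >= 85 * total:
        return "Beta"
    return "In progress" if coding_started else "Planned"


# Hypothetical modules: (total, passed, coding_started[, open_critical_issues])
samples = [
    (40, 40, True),
    (40, 38, True),
    (40, 38, True, 1),
    (40, 20, True),
    (40, 0, False),
]
for args in samples:
    print(args, "->", module_state(*args))
```

Whether coding has started cannot be derived from test results alone, so it is passed in as a flag here; in practice it would come from the project plan.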

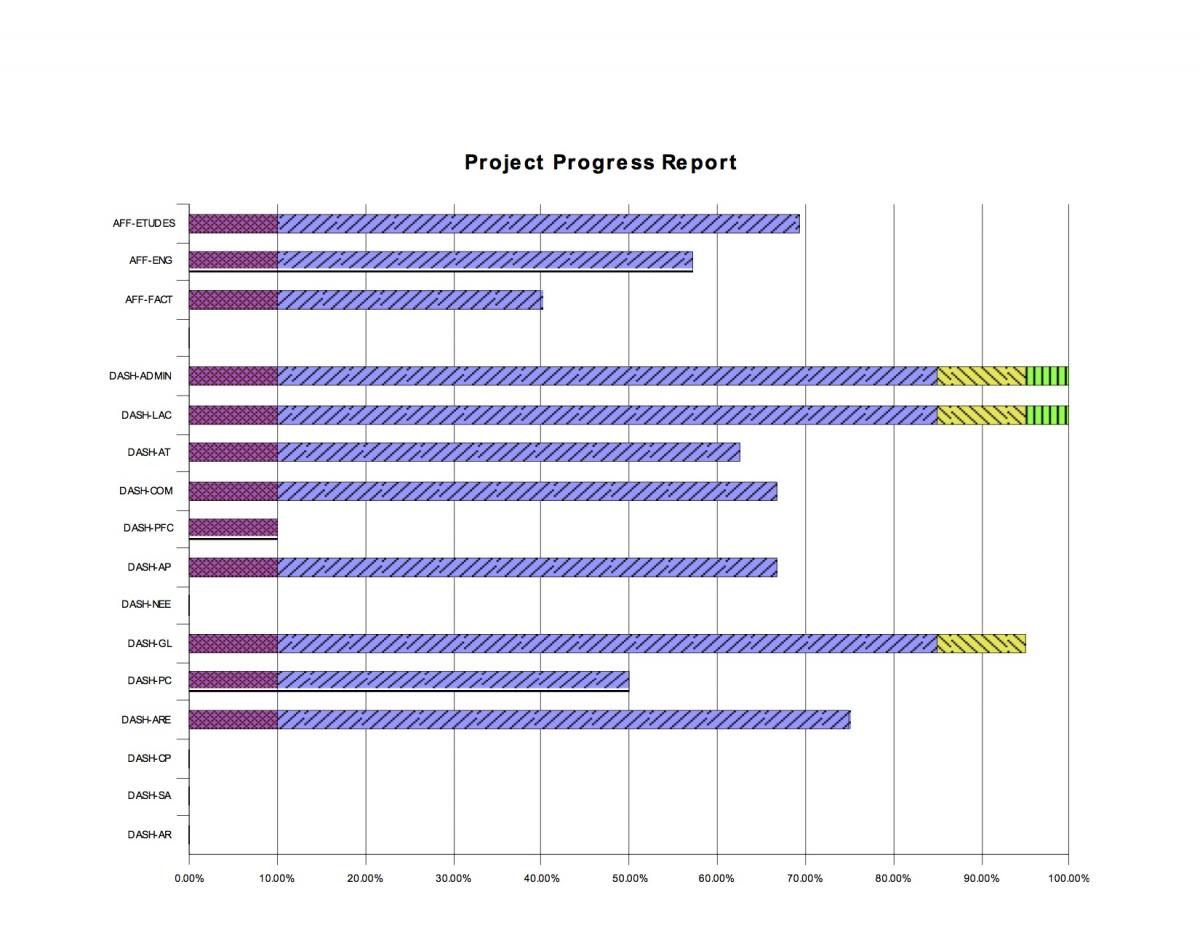

Once you have the percentage of passing test cases, you get a pretty good idea of module progress and stability. We present this data in weekly progress reports as graphs such as the one in Figure 2.

Figure 2: A typical project progress report showing progress per module based on test results

An arbitrary 10-percent violet bar (e.g., the eighth bar down in Figure 2) is used to indicate that work has started on a module. This is primarily to encourage developers and to give sponsors a clearer idea of work in progress.

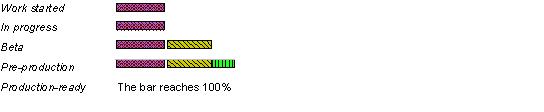

The progress of each module can be followed at a glance, using a color-coded scheme:

Figure 3: The work progress color coding

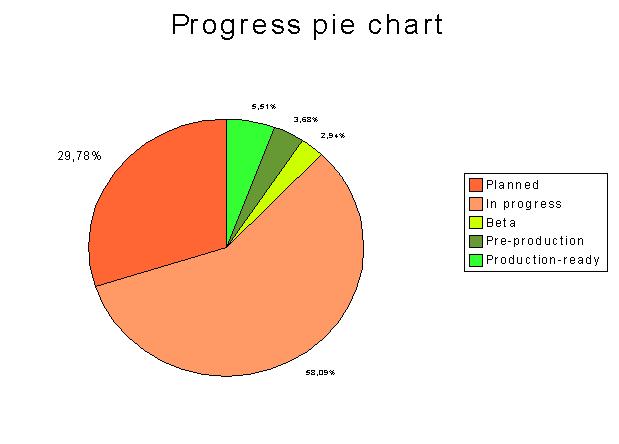

Test-based progress overview

We get a high-level overview of project progress by representing, in terms of number of test cases, the relative weight of modules in each state (see Figure 4). This graph is easy to understand for an outsider and particularly useful for an executive summary chapter in a progress report.

Figure 4: Test-based progress overview
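One way to compute the figures behind such an overview is to weight each module by its number of test cases and sum the weights per state. This is a minimal sketch under that assumption; the function name and the sample data are hypothetical.

```python
from collections import defaultdict


def overview(modules):
    """Share of the project (by test case count) in each state.

    `modules` is an iterable of (state, total_test_cases) pairs.
    Returns a dict mapping state to a percentage of all test cases.
    """
    weights = defaultdict(int)
    for state, total in modules:
        weights[state] += total
    grand_total = sum(weights.values())
    if grand_total == 0:
        return {}
    return {state: 100.0 * n / grand_total for state, n in weights.items()}


# Hypothetical project: three modules of different sizes.
print(overview([("Beta", 30), ("Planned", 50), ("Beta", 20)]))
```

Weighting by test case count follows the earlier working assumption that the number of test cases roughly reflects a module's complexity.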

Defect data

The iterative development cycle we use provides a convenient basis for tracking defect data. We try to target client-deliverable versions every one to two weeks, and an internal version on a weekly basis or sometimes every few days. The regularity of new versions is more important than the number of new modules or bug fixes in each new version. However, QA personnel do like to test the same version for a reasonable length of time before receiving a new one. Delivery target dates are decided together. Before each delivery target date, we decide whether a delivery is feasible (that is, whether any critical issues remain), and what new modules (and bug fixes) can be announced to the client.

To do this, we use defect data taken from the defect database to measure product quality and reliability. Overall-defect-status graphs show the number of defects for each defect status (open, to-be-deployed, pending validation, etc.).

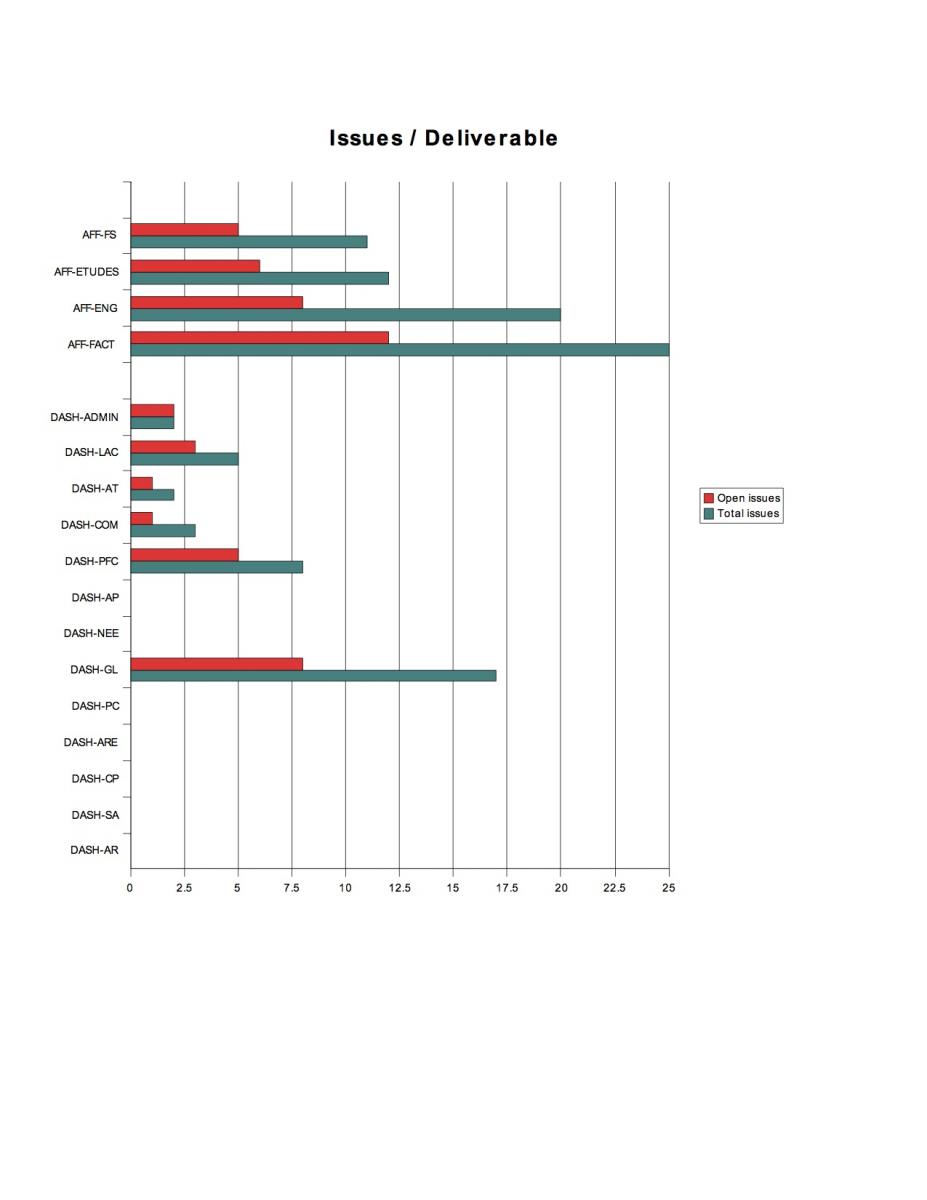

We also measure defect status--recording the number of open issues and total issues--for each deliverable. This is important for delivery scheduling:

- The number of open issues gives an idea of the current stability of a given module. Is a module presentable to the client in the next iteration?

- The number of total issues gives an idea of the number of total defects found in a given module. I generally find that modules with a record of having a lot of issues in the past are more likely to cause problems in the future (given equal testing), and in extreme cases may need refactoring or rewriting.
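The two counts described above can be derived from an export of the defect database. The sketch below assumes each defect is available as a (module, status) pair and that only the status "open" counts as an open issue; both the data shape and that choice are assumptions, and the set of open statuses can be widened through the `open_statuses` parameter.

```python
from collections import Counter


def defect_counts(defects, open_statuses=frozenset({"open"})):
    """Count open and total issues per module.

    `defects` is an iterable of (module, status) pairs.
    """
    total = Counter()
    still_open = Counter()
    for module, status in defects:
        total[module] += 1
        if status in open_statuses:
            still_open[module] += 1
    return {m: {"open": still_open[m], "total": total[m]} for m in total}


# Hypothetical export from a defect tracker.
sample = [
    ("Module A", "open"),
    ("Module A", "pending validation"),
    ("Module A", "closed"),
    ("Module B", "open"),
]
print(defect_counts(sample))
```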

We find it helpful to present this data in graphical form, as shown in Figure 5. Sponsors and upper management will get an overview of the bigger picture, whereas team members will be able to compare their results with those of their fellow team members.

Figure 5: A typical graph showing the number of issues found by deliverable

Historical data

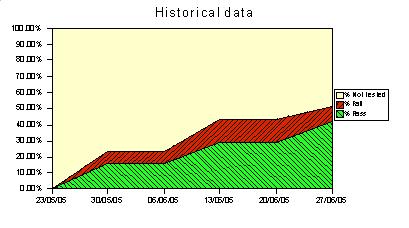

It's also important to keep track of test results over time. This gives you a historical view of how fast deliverables are being delivered and stabilized. We record total test results (passed, failed, not tested) on a weekly basis (see Figure 6), and present the results in graphical form (see Figure 7).

Figure 6: Historical data: test completion over time

Figure 7: Historical data: test completion over time
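The weekly record can be reduced to a simple trend of the percentage of passing test cases. This sketch assumes one snapshot per week stored as (week label, passed, failed, not tested); the function name and the numbers are made up for illustration.

```python
def weekly_history(snapshots):
    """Convert weekly totals into (week, percent_passed) pairs.

    `snapshots` is a list of (week_label, passed, failed, untested) tuples.
    """
    rows = []
    for week, passed, failed, untested in snapshots:
        total = passed + failed + untested
        percent = round(100.0 * passed / total, 1) if total else 0.0
        rows.append((week, percent))
    return rows


# Hypothetical snapshots for two consecutive weeks.
history = [("W1", 10, 5, 85), ("W2", 40, 10, 50)]
for week, percent in weekly_history(history):
    print(week, percent)
```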

What this approach does not do

This approach can complement--but certainly does not replace--traditional project-progress tracking and reporting. In particular, this approach is purely deliverable-oriented in that it blissfully ignores all notions of delay, costs, resource consumption, critical paths, and so on. These notions can and should be managed using other techniques such as Gantt diagrams, PERT diagrams, earned-value, and project management tools such as MS Project. Indeed, it is important to give upper management, team members, and the project sponsor as complete a view of project progress as possible. Test-based deliverable status is an important and easily understandable facet of project reporting, but delay, cost, and task-oriented views are equally important.

Conclusion

Often, project progress is measured solely using a project plan based on estimations of the remaining work for each unfinished task. In our latest project, we found that test results at a relatively low level could be used to give another, more objective view of project progress. This type of reporting also helps developers focus on tangible results and deliverable-oriented work.
