Teaching Acceptance Test-Driven Development

Acceptance test-driven development, or ATDD, is a whole-delivery-cycle method that allows the entire team to define system behavior in specific terms before coding begins. It is especially useful for batch applications, where there is a clear input, a transformation, and some sort of output. In that case, the idea of a series of tests expressed in a table makes a lot of sense. (Behavior-driven development, or BDD, also allows tables; I find it more useful for applications that have a user-defined flow.)

Here’s how we teach ATDD at my tiny company.

First, let’s start with a simple example: Fahrenheit to Celsius temperature conversion.

The formula is:

C = (F − 32) * 5/9
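As a quick sanity check on the formula, here are two conversions worked by hand. They were chosen because the answers are well known: the boiling point of water, and the point where the two scales cross.

```latex
\begin{aligned}
C &= (212 - 32) \times \tfrac{5}{9} = 180 \times \tfrac{5}{9} = 100 \\
C &= (-40 - 32) \times \tfrac{5}{9} = -72 \times \tfrac{5}{9} = -40
\end{aligned}
```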

It is easy to implement “FtoC” as a code library function that takes a Fahrenheit value and returns the Celsius value. From there, we can provide a command-line utility that takes a Fahrenheit value and prints the answer to the console. Next, we create a test utility that takes a comma-separated values file and loops through it, running the program over the first column (input), comparing the output to the second (expected result), and tallying the number of passes and fails.
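A minimal Python sketch of the library function and the CSV-driven test runner might look like the following. The names `f_to_c` and `run_table`, the assumption that the CSV has no header row, and the 0.01 tolerance are all illustrative choices, not the class's actual code; for brevity, the runner calls the function directly rather than invoking the command-line utility.

```python
import csv
import io
import math


def f_to_c(fahrenheit: float) -> float:
    """Convert Fahrenheit to Celsius: C = (F - 32) * 5/9."""
    return (fahrenheit - 32) * 5 / 9


def run_table(rows, convert=f_to_c, tolerance=0.01):
    """Run each (input, expected) row through `convert` and tally results.

    Assumes no header row. Returns a (passes, fails) tuple; a row whose
    input or expected value cannot be parsed as a number counts as a fail.
    """
    passes = fails = 0
    for row in rows:
        if len(row) < 2:
            continue  # skip blank or short lines
        try:
            actual = convert(float(row[0]))   # first column: input
            expected = float(row[1])          # second column: expected result
        except ValueError:
            fails += 1
            continue
        # Compare with a tolerance, not ==, because of floating-point rounding.
        if math.isclose(actual, expected, abs_tol=tolerance):
            passes += 1
        else:
            fails += 1
    return passes, fails


# Usage: an in-memory stand-in for the exported CSV file.
sample = io.StringIO("212,100\n32,0\n-40,-40\n33.8,1\n")
passes, fails = run_table(csv.reader(sample))
print(f"{passes} passed, {fails} failed")  # prints: 4 passed, 0 failed
```

The tolerance matters: 33.8 has no exact binary floating-point representation, so (33.8 − 32) × 5/9 comes out a hair under 1, and an exact `==` comparison against a spreadsheet value of 1 would report a spurious failure.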

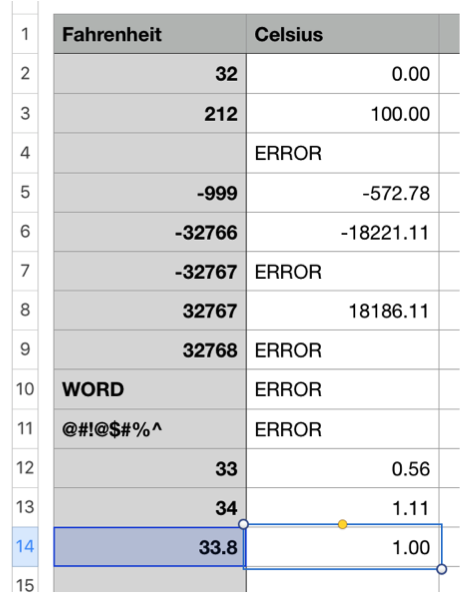

To create the CSV file, we just create an Excel file that looks like this:

Row 14 is interesting: It introduces the idea of fractional inputs. I’d say odds are about fifty-fifty that the programmer takes the input as an integer, truncating 33.8 to 33. Having these tests defined prevents that problem.
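To see why a fractional row earns its place in the table, here is a small sketch comparing a hypothetical truncating implementation with a correct one on the input 33.8. By the formula, 33.8 °F is exactly 1 °C; both function names are made up for illustration.

```python
import math


def f_to_c_truncating(raw: str) -> float:
    # Hypothetical buggy version: treats the input as an integer,
    # silently dropping the fractional part (33.8 becomes 33).
    return (int(float(raw)) - 32) * 5 / 9


def f_to_c_correct(raw: str) -> float:
    # Keeps the fractional part of the input.
    return (float(raw) - 32) * 5 / 9


row = ("33.8", 1.0)  # fractional input and its expected Celsius value
for impl in (f_to_c_truncating, f_to_c_correct):
    result = impl(row[0])
    status = "pass" if math.isclose(result, row[1], abs_tol=0.01) else "FAIL"
    print(f"{impl.__name__}: {result:.3f} -> {status}")
# prints:
# f_to_c_truncating: 0.556 -> FAIL
# f_to_c_correct: 1.000 -> pass
```

An integer-only row such as 212 would pass under both versions; only a fractional input exposes the truncation.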

In the first round of the exercise, we have separate tester and programmer roles. The testers create the tests, the programmers write the code, and then we run the tests over the code and see how many pass.

Most of the time there are a lot of failures, which lead to silly arguments about what the code should do—after the code has already been created.

From there, we start over, now with tester, programmer, and product owner roles. This time the group meets to create the examples collaboratively, then works together on the code, while the testers think up still more examples and one-time-use tests.

In this second round, you can almost see the test-report-fix cycles disappear.

This is putting the “Three Amigos” strategy to real use: The three roles of tester, programmer, and product owner bring different, insightful viewpoints to anything you build. Our ATDD students don’t just read about the strategy on a PowerPoint slide; they have created the same program with both methods and learned which is superior.

Students who run through the exercise only need one laptop for every few people. And in a group of three to five people, only one needs to be able to write code.

The simplest implementation of the code is a single line:

print((f - 32) * 5 / 9)

But any serious work will result in more like a dozen lines of code. That helps the programmer understand hidden complexity, as well as what testers actually do. This is also easy to implement in any programming language.
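As a rough illustration of where the extra lines come from, here is a sketch of a command-line entry point that handles a few cases the one-liner ignores: a missing argument, non-numeric input, fractional input, and values below absolute zero (−459.67 °F). Whether below-absolute-zero input should be an error, and the two-decimal output format, are assumptions for this sketch; those are exactly the decisions the team should settle in the test table.

```python
import math
import sys

# Assumed lower bound: the spec in the article does not say how to treat it.
ABSOLUTE_ZERO_F = -459.67


def f_to_c(fahrenheit: float) -> float:
    return (fahrenheit - 32) * 5 / 9


def main(argv: list[str]) -> int:
    """Return a process exit code: 0 ok, 1 bad input, 2 usage error."""
    if len(argv) != 2:
        print("usage: ftoc FAHRENHEIT", file=sys.stderr)
        return 2
    try:
        fahrenheit = float(argv[1])  # accepts fractional input such as 33.8
    except ValueError:
        print(f"error: not a number: {argv[1]!r}", file=sys.stderr)
        return 1
    if not math.isfinite(fahrenheit):  # float() also accepts "nan" and "inf"
        print("error: input must be a finite number", file=sys.stderr)
        return 1
    if fahrenheit < ABSOLUTE_ZERO_F:
        print("error: below absolute zero", file=sys.stderr)
        return 1
    print(f"{f_to_c(fahrenheit):.2f}")  # assumed output format: two decimals
    return 0


main(["ftoc", "98.6"])  # prints: 37.00
```

In a real script you would wire this up with `sys.exit(main(sys.argv))`; passing the argument list explicitly keeps `main` easy to drive from the CSV test utility.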

When I’m running the class, I try to use a language different from the one the programmers use every day in order to keep things interesting for them. Testers get to have fun finding the hidden exceptions, while product people get immersed in a development experience that is just realistic enough but not scary. Meanwhile, the entire development feedback cycle is compressed into fifteen-minute sprints.

Plus, of course, the exercise is tactile; the team experiences ATDD by doing it. We need more test training like that.

But there’s a problem: Without something exciting, students are likely to miss the point of the exercise once they start sleepwalking through the details. They need something to wake them up.

Before I have people break out laptops and go into Excel, we first run an ATDD simulation on easel boards with colored markers. Borrowing from the work of Adam Yuret, we ask people to plan part of robbing a bank: the entrance and the departure. Instead of user stories, we frame these aspects in terms of tests.

The tests take forms like these: at a certain time, we execute a time hack; people enter the bank a set time apart; they arrive from a given place. Framing them as tests means each one can be evaluated as true or false. For example, let’s say the getaway car needs to pull up between 2:57 and 3:03 p.m. A requirement of exactly 3:00 p.m. is either impossible to measure (how close is close enough?) or will always fail.
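The difference between a window and an exact target can be sketched in a few lines. The function names below are hypothetical; the 2:57 to 3:03 p.m. window comes from the example above, and the arrival time is made up.

```python
from datetime import time


def arrived_on_time(arrival: time,
                    earliest: time = time(14, 57),
                    latest: time = time(15, 3)) -> bool:
    """True if the arrival falls inside the inclusive window."""
    return earliest <= arrival <= latest


def arrived_exactly(arrival: time, target: time = time(15, 0)) -> bool:
    """The brittle version: passes only on an exact match."""
    return arrival == target


arrival = time(15, 0, 12)  # the car pulls up 12 seconds after 3:00 p.m.
print(arrived_on_time(arrival))  # prints: True
print(arrived_exactly(arrival))  # prints: False
```

The window version gives a clear true/false answer for any arrival; the exact version fails for almost every real-world arrival, down to the second.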

Once students understand the dynamics, we walk them around the room to look at everyone else’s plans, then redo the exercise. Often, teams pick up incredible ideas from one another. We do the same thing with the second exercise, the Fahrenheit-to-Celsius program. There, the walkaround shows that even with an identical specification, there are many implementations that can vary in behavior quite a bit, yet they all might be “good enough.”
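Here is a sketch of how two implementations can behave differently yet both satisfy the same table. One returns the unrounded result and the other rounds to one decimal place; the example rows and the 0.05 tolerance are illustrative assumptions, not a table from the class.

```python
import math


def f_to_c_full(fahrenheit: float) -> float:
    # Implementation A: returns the unrounded result.
    return (fahrenheit - 32) * 5 / 9


def f_to_c_rounded(fahrenheit: float) -> float:
    # Implementation B: rounds to one decimal place before returning.
    return round((fahrenheit - 32) * 5 / 9, 1)


# Shared specification: (Fahrenheit input, expected Celsius) pairs.
SPEC = [(212, 100.0), (32, 0.0), (-40, -40.0), (33.8, 1.0), (100, 37.78)]


def passes_spec(convert, tolerance=0.05) -> bool:
    """True if every row in SPEC is matched within the tolerance."""
    return all(math.isclose(convert(f), c, abs_tol=tolerance) for f, c in SPEC)


for impl in (f_to_c_full, f_to_c_rounded):
    # The two outputs for 100 °F differ, but both pass the shared table.
    print(impl.__name__, impl(100), passes_spec(impl))
```

Whether a 0.05-degree tolerance is “good enough” is itself a product decision; tightening it to 0.01 would make the rounded version fail on the 100 °F row.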

What ATDD does is align the expectations of the testers, developers, and product owners, forcing the silly argument to happen before the code is created, so no one has to create the code twice.

And that is a quick summary of how we teach ATDD. What would you like to know, or how do you teach acceptance testing at your company?


User Comments


Some interesting hidden assumptions.

- Why do some apparently valid numeric inputs result in an error?

- Should inputs lower than absolute zero result in an error?

- Should such an error be different from errors from non-numeric inputs?

But that's exactly the point: finding these as errors (or not!) uncovers hidden assumptions, which, from the product owner's side, are unstated requirements (assuming that "everyone knows that").

Thanks, Ken.

Yes, that was the whole point. Done separately, there are hidden assumptions. Worked out collaboratively, those tests express the explicit shared intent of the system, designed up-front to prevent a silly argument later. :-)

As a lifelong tester, I strongly advocate against any kind of test-driven design, and acceptance testing is even worse.

In general, this leads to repairing symptoms instead of design and implementation defects. It is important to remember that testing cannot determine whether a product is correct, only that it is not correct. Tests should be designed to detect what is incorrect, not what is correct; they should detect what the software developer didn't think of, not what he or she did think of.

Acceptance testing is, in general, a series of tests that demonstrates to a customer an acceptable level of operability. These are certainly things that must be tested, but my experience is that when an acceptance test fails, the company offering the product negotiates a settlement so they can get paid (we called it "an engineer in a box". Think product configuration management.)

And what about non-functional requirements? Security, maintainability, ... the list goes on.

I can agree with you that ATDD is not /sufficient/ to call the software 'done.' What it is good for is improving first-time quality and eliminating ridiculous and silly arguments about what the software /could/ do, held after it was already built.

Here's a piece I wrote exploring the same questions from a different angle, eleven years ago:

http://www.drdobbs.com/are-tests-requirements/199600262

THAT article asks if ATDD-style checks are requirements. I said no.

You could also ask 'Are ATDD-style checks human curious tests?' I'd suggest that is no as well.

Man, that FToC is a great example ... :-)

Let's hang!