At first this question bothered me. It doesn’t matter how your competitors are doing at agile, I reasoned. If you’re not perfect yet, keep improving. It took me a while, but I eventually realized the flaw in my thinking. A business does not need to be perfect; it needs only to be better than (and stay ahead of) its competition. Google is the dominant search engine today not because the results it shows are perfect but because its results are usually better than those shown by its competition.

This means that agile does not need a five-level maturity model similar to CMMI. Organizations are not striving for perfection against some idealized list of agile principles and practices. Rather, they are trying to be more agile than their competitors. This does not mean that becoming agile is itself the goal. Producing better products than the competition remains the goal. But being more agile than one’s competitors is indicative of the organization’s ability to deliver better products more quickly and cheaply.

With this in mind, Kenny Rubin, Laurie Williams and I created the Comparative Agility assessment (CA), which is available for free online. Like the Shodan Adherence Survey and Agile:EF, a CA assessment can be based on individual responses to survey questions. However, it was also designed to be completed by an experienced ScrumMaster, coach, or consultant on behalf of a team or company based on interviews or observation.

Survey responses for the organization are aggregated and may then be compared against the entire CA database. Responses can also be compared to a subset of the database. You can, for example, choose to compare your team to all other companies doing web development, all companies that are about six months into their agile adoption efforts, all companies in a specific industry, or a combination of such factors. You can also compare your team against its own data from a prior period, allowing you to see what improvements have been made since then.
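To make the idea of comparing against a subset concrete, here is a minimal sketch of how a benchmark population might be selected from a set of records. The record fields, the `select_benchmark` function, and the sample data are all hypothetical; the actual structure of the CA database is not described here.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TeamRecord:
    """Hypothetical record layout; not the real CA database schema."""
    team: str
    work_type: str       # e.g. "web" or "embedded"
    months_agile: int    # months since the agile adoption began
    industry: str
    planning_score: float


def select_benchmark(records, work_type=None, industry=None, months_range=None):
    """Return the records matching every filter that was supplied."""
    selected = []
    for record in records:
        if work_type is not None and record.work_type != work_type:
            continue
        if industry is not None and record.industry != industry:
            continue
        if months_range is not None:
            low, high = months_range
            if not (low <= record.months_agile <= high):
                continue
        selected.append(record)
    return selected


# Invented sample data, for illustration only.
records = [
    TeamRecord("A", "web", 6, "retail", 3.2),
    TeamRecord("B", "web", 18, "finance", 4.1),
    TeamRecord("C", "embedded", 5, "retail", 2.7),
    TeamRecord("D", "web", 7, "retail", 3.8),
]

# Web development teams roughly six months into their adoption.
web_early = select_benchmark(records, work_type="web", months_range=(4, 8))
print([r.team for r in web_early])  # ['A', 'D']
print(mean(r.planning_score for r in web_early))
```

Combining filters this way mirrors the "combination of such factors" described above: each filter that is supplied narrows the comparison population further.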

At the highest level, the CA approach assesses agility on seven dimensions:

- Teamwork

- Requirements

- Planning

- Technical practices

- Quality

- Culture

- Knowledge creation

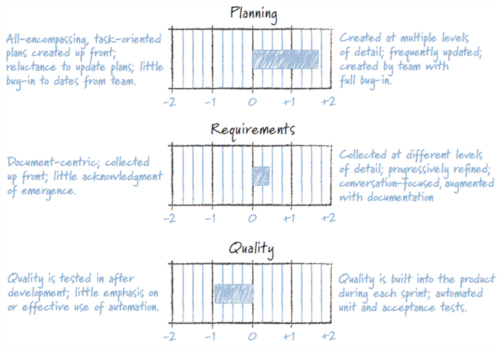

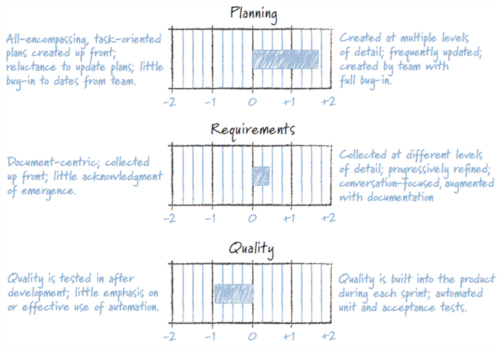

Partial results showing a team assessed on three dimensions are shown in Figure 1. This figure shows how one particular team compared to a population of other teams pulled from the CA database (in this case, other teams doing web development). Zero represents the mean value of all matching teams in the database. The vertical lines labeled from –2 to 2 each represent one standard deviation from the mean. From Figure 1 we can see that this team is doing much better than average at Planning, a little better than average at Requirements, and significantly worse than average at Quality.

Figure 1: The results of a Comparative Agility assessment show that this team is better than average at planning and requirements but worse at quality.
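The scale in Figure 1, with zero at the mean and each vertical line one standard deviation away, corresponds to what statisticians call a standardized score. The following sketch shows that arithmetic. The function name and the sample scores are invented for illustration, and the use of the population standard deviation (rather than the sample standard deviation) is an assumption; how CA computes its figures internally is not stated here.

```python
from statistics import mean, pstdev


def standardized_score(team_score, population_scores):
    """Express a team's score as standard deviations from the population mean.

    Assumption: the population standard deviation is used.
    """
    mu = mean(population_scores)
    sigma = pstdev(population_scores)
    if sigma == 0:
        raise ValueError("All population scores are identical; no spread to compare against.")
    return (team_score - mu) / sigma


# Invented raw scores for the matching teams on one dimension.
population = [2, 4, 4, 4, 5, 5, 7, 9]  # mean 5, standard deviation 2

print(standardized_score(7, population))  # 1.0: one standard deviation above the mean
print(standardized_score(3, population))  # -1.0: one standard deviation below the mean
```

A result near zero means the team is about average for the chosen population; a result near –2 or 2 places it at the outer vertical lines of the chart.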

Each of the three dimensions shown in Figure 1 is composed of three to six characteristics. A set of questions is asked to assess a team’s score on each characteristic. For example, characteristics of the planning dimension include:

- When planning occurs

- Who is involved

- Whether both release and sprint planning occur

- Whether critical variables (such as scope, schedule and resources) are locked

- How progress is tracked

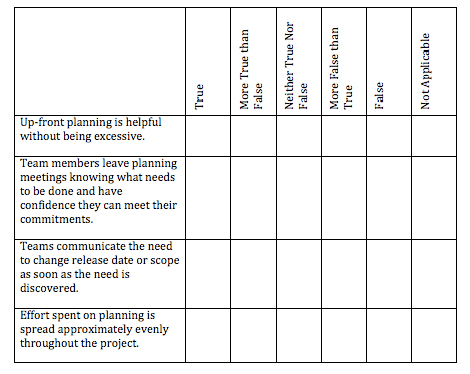

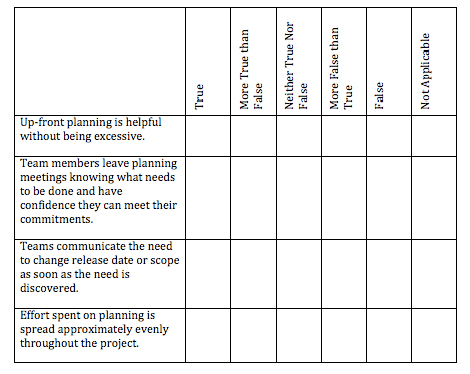

The questions for the “when does planning occur” characteristic are shown in Figure 2. Each question is answered on a scale of true, more true than false, neither true nor false, more false than true, false, and not applicable.

Figure 2: One of the characteristics considered by the Comparative Agility assessment is when a team plans.

Strengths and Weaknesses

A strength of the Comparative Agility assessment is that it was designed to lead to actionable results. Like the other assessments, the results point first to a shortcoming in one of the seven dimensions, but unlike other assessment approaches, drilling into that dimension reveals the specific characteristic the organization is struggling with. This should help the team or its ScrumMaster more easily identify actions to take. For example, if we were to look into the low score for quality in Figure 1, we would see that there are three characteristics to the quality dimension: automated unit testing, customer acceptance tests, and timing.

Because the CA approach assesses each characteristic individually, it is possible to see which of these characteristics is dragging down the organization’s overall quality score.
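As a rough sketch of that drill-down, the snippet below picks out the lowest-scoring characteristic within a dimension. The characteristic names come from the quality dimension described above, but the scores and the `weakest_characteristic` helper are hypothetical.

```python
def weakest_characteristic(characteristic_scores):
    """Return the name of the characteristic with the lowest score."""
    if not characteristic_scores:
        raise ValueError("No characteristic scores supplied.")
    return min(characteristic_scores, key=characteristic_scores.get)


# Invented scores, expressed as standard deviations from the benchmark mean.
quality = {
    "automated unit testing": -1.4,
    "customer acceptance tests": -0.2,
    "timing": 0.1,
}

print(weakest_characteristic(quality))  # automated unit testing
```

In this invented example, the low overall quality score would be traced mostly to automated unit testing, which gives the team a specific place to start.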

The comparative nature of the CA assessment was intended to be its biggest strength. By seeing how your organization compares with others, you can focus improvement efforts on the most promising areas.

The most significant weakness of CA is the breadth of the survey. The survey includes over 100 questions about the development process. There are two common solutions:

- Perform a full assessment only once or twice a year. (Quarterly might be acceptable and relevant in some organizations.)

- Assess only one of the seven dimensions per month.

The Comparative Agility assessment is a proven way for teams and organizations to check their progress, either against themselves at a prior time or against a comparative set of benchmark data. By using the results of a Comparative Agility assessment to periodically reflect on what your team does well and where it could improve, you will be able to help the team become more agile. This in turn will help the team achieve the real goal of delivering better products more quickly.

About the Author

Mike Cohn is the founder of Mountain Goat Software (http://www.mountaingoatsoftware.com), where he teaches and coaches on Scrum and agile development. He is the author of Succeeding with Agile: Software Development with Scrum, Agile Estimating and Planning, and User Stories Applied for Agile Software Development. Mike is a founding member of the Scrum Alliance and the Agile Alliance. He can be reached at www.mountaingoatsoftware.com.
