Agile CMMI: KPIs for Agile Teams

Today's Agile teams contend with challenges that few development visionaries could have imagined when the foundations for Agile were first put in place. In this article, we will examine Key Performance Indicators (KPIs) that Agile teams can use to achieve transparency into key development processes, and fulfill the customer requirements of our maturing world.

1.0 - Coming of Age

Agile methods have truly come of age. The years since Jeff Sutherland's pioneering work on Scrum in 1993[i], and Kent Beck's creation of XP in 1996, have proven Agile's enduring qualities.

The world into which Agile has matured poses a new set of challenges to which Agile must now rise. We've moved from the physical bullpen to the virtual bullpen, with Agile teams contending with time zones, language barriers, and cultural distinctions. We've moved from coding guidelines to strict regulatory compliance. In fact, the development governance requirements for some industries are so strict that heavier-weight methodologies, with their emphasis on documentation, contracts and plans, begin to emerge as more attractive than their lighter-weight counterparts.

In a maturing environment, being better is not enough. We have to renew and reinvigorate our commitment to our customers, whether the external customer who buys our product, or the internal business unit counterpart whom we serve. We have to embrace that same commitment to our management, the folks who fight for the budget and resources we need to accomplish our tasks.

A key component of this commitment is understanding new requirements in three areas: traceability, auditability, and compliance. It's not good enough to tout the benefits of Agile; we have to prove those benefits. And there's no better way than actively partnering with our customers and management alike, in a way that is meaningful to them.

2.0 - Process and KPIs

Software projects are usually evaluated in terms of either process or outcome. Agilists put special focus on process so that a successful outcome may be achieved, and strike a good balance between team and customer. But we also recognize that a process perspective is not, by itself, enough. To make a process really perform, we have to understand it, characterize it, benchmark it, and optimize it. KPIs are the power tools for accomplishing this; by using KPIs, we're able to tune processes toward superior outcomes.

Process distills to three basic elements: incoming materials, outgoing product, and the steps by which labor transforms one into the other. KPIs are there for us at every step of the way. They describe the quality of incoming and outgoing materials, the stability of the transformational steps, and the overall capability of the process to perform at expected levels.

We can learn from the parallels between Agile's coming of age and manufacturing. Industrial engineers began the formal study of process and KPIs in the 1950s. Over time, competition and tightening margins drove efficiency, often coupled with regulatory requirements around quality and safety. This resulted in manufacturers squeezing greater performance out of their processes, while at the same time being able to retain and audit what was done. Quality philosophy became increasingly refined, with tools to match. Inspection gave rise to statistical process control, which in turn empowered today's ultra-high-efficiency lean manufacturing environments. Because manufacturers invest in processes, applying KPIs to advances in assembly and materials technologies, the rest of us get to enjoy the high quality, high yield, short run, low cost consumer products they produce.

We can apply manufacturing's three key lessons to development iterations. First, if you are engaged in a process - any process - there are KPIs to tell you about the quality of the work products, and the profile of the process that produced them. Second, KPIs gain relevance and timeliness when they are automated. Third, mediating process transparency through KPIs enhances communication with management, suppliers, and customers.

3.0 - Timebox your KPIs

An Agile process transforms backlog into working software through transformational stages: design, programming, build and test. In a given timebox or sprint, the process is characterized by two principal measures: the volatility, or degree of churn, created by the team with respect to logic artifacts, such as code and test scripts; and the volume of the logic artifacts produced, such as the net number of source lines of code added, or the number of backlog work items addressed in that timebox. The quality of the work product as it moves through the process is given by assessing compliance with the team's engineering standards.

3.1 - Volatility

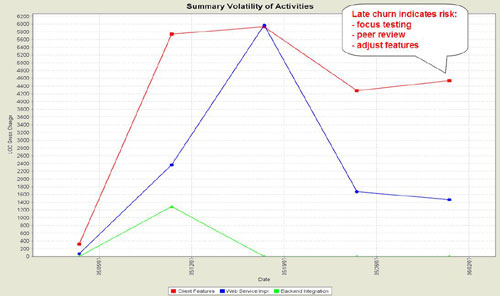

Volatility informs the team of the transactions taking place in the process. Visualizing process volatility from a variety of perspectives brings transparency, and permits lean development governance[ii]. Consider how much we can learn from the following graph, showing the churn associated with coding changes for a May 2007 timebox[iii], expressed as gross LOC changed per week for summary activities:

In general, we want to see coding changes calm down in advance of a release, to give sufficient time for final test (e.g. integration, acceptance, or production trial). Volatility graphs depict durations and magnitudes: "Backend Integration" completed in the second week, with modest code changes (1,300 LOC) relative to the other summary activities. "Web Service Impl" ramped up to a peak period in the third week; this is a desirable trajectory in a timebox, often likened to an aircraft flight, with a smooth glide path to land at the code freeze date. "Client Features", on the other hand, is undergoing such a high rate of change in the final week that it clearly needs extra scrutiny to contain the risk that those late-breaking changes introduce to the project.
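The kind of tally behind such a graph can be sketched in a few lines of Python. This is a minimal illustration, not how any particular tool derives its numbers: the record layout, the `weekly_churn` and `late_risers` helpers, the May 1 timebox start, and every figure in `CHANGES` are assumptions invented for the example.

```python
from collections import defaultdict
from datetime import date

# Hypothetical change records, invented for illustration:
# (commit date, summary activity, lines added, lines deleted).
CHANGES = [
    (date(2007, 5, 2), "Backend Integration", 400, 150),
    (date(2007, 5, 9), "Backend Integration", 500, 250),
    (date(2007, 5, 10), "Web Service Impl", 900, 100),
    (date(2007, 5, 16), "Web Service Impl", 2000, 600),
    (date(2007, 5, 23), "Web Service Impl", 300, 100),
    (date(2007, 5, 24), "Client Features", 3500, 1200),
]
TIMEBOX_START = date(2007, 5, 1)  # assumed first day of the timebox


def weekly_churn(changes, timebox_start):
    """Return {activity: {week: gross LOC changed}}, with weeks counted from 1."""
    churn = defaultdict(lambda: defaultdict(int))
    for day, activity, added, deleted in changes:
        week = (day - timebox_start).days // 7 + 1
        # Gross churn counts every touched line, added or deleted.
        churn[activity][week] += added + deleted
    return {activity: dict(weeks) for activity, weeks in churn.items()}


def late_risers(churn, final_week):
    """Activities whose busiest week of the timebox is the final one."""
    return sorted(
        activity
        for activity, weeks in churn.items()
        if weeks.get(final_week, 0) > 0
        and weeks.get(final_week, 0) == max(weeks.values())
    )


if __name__ == "__main__":
    churn = weekly_churn(CHANGES, TIMEBOX_START)
    for activity, weeks in churn.items():
        print(activity, weeks)
    print("Needs extra scrutiny:", late_risers(churn, final_week=4))
```

A peak in the final week is only a prompt for a closer look; whether it represents real risk depends on what the changes are and how much test time remains.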

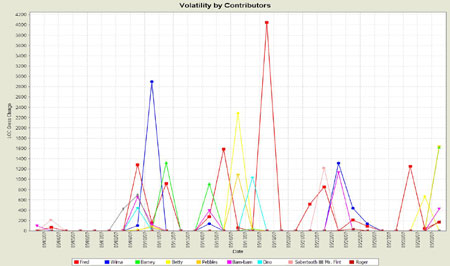

The next example shows how churn can also be examined in terms of who is contributing to the code base through the course of the timebox. This yields two valuable aspects of process: are programmers able to complete tasks in a timely manner, and is anybody making unauthorized changes to our project:

Contribution is not the same as productivity: there are many factors to programmer productivity beyond how many lines we code. However, if developers are unable to contribute work against backlog in a timely manner, then either they are blocked and need assistance (e.g. Barney seems to have gotten blocked before the halfway point), or the breakdown of coding tasks may be too coarse-grained for real agility. Visualizing contribution supports Agile methods by letting us put course corrections and mentoring in place early on, when they can do the most good.
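Both questions, who has gone quiet and who is changing code without being on the team, reduce to simple lookups once contributions are tabulated by developer and week. The sketch below assumes a hypothetical record format and roster; apart from Barney, the names, the figures, and the two-week quiet threshold are made up for illustration.

```python
from collections import defaultdict

# Hypothetical records, invented for illustration:
# (week of timebox, contributor, gross LOC changed).
CONTRIBUTIONS = [
    (1, "Barney", 600), (2, "Barney", 150),
    (1, "Wilma", 400), (2, "Wilma", 700), (3, "Wilma", 900), (4, "Wilma", 300),
    (3, "guest_account", 50),
]
TEAM = {"Barney", "Wilma"}  # assumed roster of authorized contributors


def contribution_by_week(records):
    """Return {contributor: {week: gross LOC changed}}."""
    table = defaultdict(dict)
    for week, who, loc in records:
        table[who][week] = table[who].get(week, 0) + loc
    return dict(table)


def gone_quiet(table, team, current_week, quiet_weeks=2):
    """Team members with no recorded changes in the last `quiet_weeks` weeks."""
    recent = range(current_week - quiet_weeks + 1, current_week + 1)
    return sorted(
        member
        for member in team
        if not any(table.get(member, {}).get(week, 0) for week in recent)
    )


def unauthorized(table, team):
    """Contributors who appear in the change history but not on the roster."""
    return sorted(set(table) - set(team))


if __name__ == "__main__":
    table = contribution_by_week(CONTRIBUTIONS)
    print("Check in with:", gone_quiet(table, TEAM, current_week=4))
    print("Not on the roster:", unauthorized(table, TEAM))
```

A quiet spell does not prove that someone is blocked; it is a cue to ask, which is the early course correction the KPI is meant to enable.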

There are many other forms of volatility analysis that yield insight into process: by stream (or branch); by framework; by type of logic artifact, to name a few. The important thing is to start with a small set of simple, pragmatic measures that make a difference, and build from there over time.

3.2 - Volume

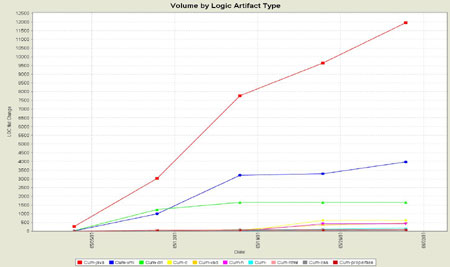

The volume KPI expresses the changing size of the logic artifacts over time. There are many uses for this KPI: volume is one of the principal correlations to future maintenance costs; OO efforts often want to see a reduction in volume at various stages, as classes are refactored, combined and collapsed; volume is fundamental to code coverage and defect density; and more.

The following example shows the net change in the volume of the different sorts of logic artifacts undergoing change in our May 2007 sprint. In this case, we have a steady increase over time in the Java, XML, and other artifact types that make up the project:

When we begin to visualize volume changes, we become better tuned to process capabilities. How many developers does it take to review 12,000 net new LOC? Given prior defect density and the "law of large numbers", what can we expect in terms of new bugs found and not found in this new code? How many developers and which skills will it take to maintain institutional memory of this code for future maintenance efforts?
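As a rough sketch of the arithmetic involved, the Python below accumulates net volume by artifact type and then projects a defect count from an assumed historical defect density. The weekly figures, the helper names, and the density of 2.5 defects per thousand lines are all hypothetical; substitute your own team's history.

```python
from collections import defaultdict
from itertools import accumulate

# Hypothetical weekly changes, invented for illustration:
# (week, artifact type, lines added, lines deleted).
NET_CHANGES = [
    (1, "java", 3000, 500), (2, "java", 4000, 1000),
    (3, "java", 3500, 500), (4, "java", 2000, 500),
    (1, "xml", 600, 100), (2, "xml", 500, 0),
    (3, "xml", 400, 100), (4, "xml", 800, 100),
]


def cumulative_volume(changes, weeks):
    """Return {artifact type: running net LOC at the end of each week}."""
    net = defaultdict(lambda: [0] * weeks)
    for week, kind, added, deleted in changes:
        # Net volume is growth: lines added minus lines deleted.
        net[kind][week - 1] += added - deleted
    return {kind: list(accumulate(per_week)) for kind, per_week in net.items()}


def expected_defects(net_new_loc, defects_per_kloc):
    """Project a defect count from net new LOC and a prior defect density."""
    return net_new_loc / 1000 * defects_per_kloc


if __name__ == "__main__":
    volume = cumulative_volume(NET_CHANGES, weeks=4)
    total = sum(series[-1] for series in volume.values())
    print(volume)
    print("Net new LOC:", total)
    # 2.5 defects per KLOC is an assumed figure, not a benchmark.
    print("Expected defects:", expected_defects(total, defects_per_kloc=2.5))
```

The projection is only as good as the density behind it, and it says nothing about how many of those defects testing will actually find.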

3.3 - Quality of Work Product

The essence of development governance is to assess the compliance of the work product against engineering standards. If you don't have standards, you have no basis for a quality program. If you have standards and no compliance assessment, you can't know where you stand with your quality program. The work product quality KPI opens the window into the process so that you can achieve real development governance.

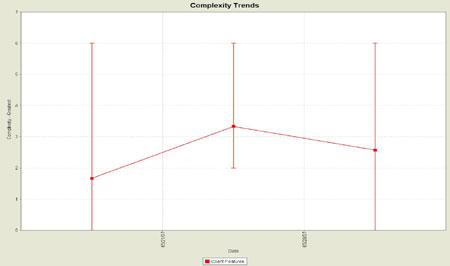

Most teams have standards, and they usually include coding guidelines, compatibility with language vendor guidelines and industry standards, and keeping a handle on code complexity. Here's the trend in the injection of complexity in our May 2007 sprint:

The graph shows the average complexity and range bars (min and max) for Java code changed each week. The team standard was to contain complexity to 10 or lower; we can see that the team complied with that standard, never exceeding 6. In other sprints, an uptick in complexity prompted peer review, enhanced unit testing to ensure coverage for the new execution paths, and even rework to re-express the logic in clearer, more maintainable terms.
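A summary of this shape is straightforward to compute once complexity scores are available per changed unit of code. The sketch below assumes the scores have already been produced by whatever metric and tool your team uses; the scores themselves and the helper names are invented for illustration, and only the limit of 10 comes from the team standard described above.

```python
from statistics import mean

# Hypothetical complexity scores for the Java code changed in each week,
# invented for illustration.
SCORES = {
    1: [2, 3, 4],
    2: [1, 3, 5, 3],
    3: [2, 6, 4],
    4: [3, 3],
}
LIMIT = 10  # team standard: contain complexity to 10 or lower


def weekly_summary(scores):
    """Return {week: (average, minimum, maximum)} for plotting range bars."""
    return {
        week: (mean(values), min(values), max(values))
        for week, values in sorted(scores.items())
    }


def weeks_over_limit(scores, limit):
    """Weeks in which any changed code exceeded the team's limit."""
    return [week for week, values in sorted(scores.items()) if max(values) > limit]


if __name__ == "__main__":
    for week, (avg, low, high) in weekly_summary(SCORES).items():
        print(f"week {week}: avg={avg} min={low} max={high}")
    print("Weeks needing review:", weeks_over_limit(SCORES, LIMIT))
```

An empty list from `weeks_over_limit` corresponds to the compliant sprint shown in the graph; any week it does return is a candidate for peer review or additional unit tests.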

4.0 - Tools and Automation

Change management tools can offer a platform for deriving the KPIs described above. Your ability to visualize process capabilities is predicated on tool capabilities. The examples given here are all based on IBM Rational ClearCase UCM. Other examples of change management tools well suited both for Agile teams and for deriving KPIs include AccuRev, Perforce, Subversion, and Rational Team Concert / Jazz.

Automation helps you get traction with KPIs by delivering timely reports without adding administrative burden to the team. Vendors like SourceIQ offer solutions that integrate with your existing change management infrastructure to automate the derivation and syndication of KPIs.

As you seek to apply KPIs, consider your process capabilities, engineering standards, and tooling together. You'll find a treasure trove of KPIs ready to be explored, baselined and shared. This empowers teams to achieve superior results and demonstrable compliance, even in a virtual bullpen. Transparency helps management and customers to understand the value of Agile in meeting their requirements.

[i] "Agile Development: Lessons Learned from the First Scrum", Dr. Jeff Sutherland

[ii] "Lean Development Governance Whitepaper", Scott Ambler and Per Kroll

[iii] All examples given are based on SourceIQ Enterprise Server analysis of a production IBM Rational ClearCase UCM environment. Where appropriate, names have been altered to protect privacy.
